Executive Summary

April 2026 was the month AWS moved from selling AI infrastructure to shipping AI products. Three headline announcements at the What’s Next event on April 28 drove that shift:

- Amazon Quick launched as a consumer AI assistant with a free tier – no AWS account required.

- Amazon Connect restructured into four separate agentic AI products across supply chain, hiring, customer service, and healthcare.

- AWS and OpenAI expanded their partnership, bringing GPT-5.5 and GPT-5.4 to Amazon Bedrock in limited preview.

For FinOps and engineering teams, three changes need action now:

- EC2 Capacity Block prices are up roughly 15% across the P5en, P5e, P5, P4d, Trn2, and Trn1 families. Reserved GPU compute is more expensive, and the April pricing review has passed.

- Bedrock granular cost attribution is live. AI spend can now be charged back to individual IAM principals, teams, and projects.

- Database Savings Plans now cover OpenSearch Service and Neptune Analytics – new commitment opportunities that did not exist last month.

All pricing figures in this document require verification at aws.amazon.com/pricing before acting on them.

What’s Next with AWS – April 28 Event: The Three Headline Announcements

On April 28, 2026, AWS held its What’s Next with AWS virtual event, where CEO Matt Garman, SVP Colleen Aubrey, and CMO Julia White presented the largest cluster of AI product announcements since re:Invent 2025. Three announcements stood out for cloud engineers and FinOps teams.

1. Amazon Quick: AWS’s AI Assistant Goes Consumer

Amazon Quick is AWS’s AI assistant for work. It connects to your apps, learns your preferences, and takes action on your behalf. Prior to April 28, Quick was invite-only and enterprise-focused. The April 28 launch opened it to individual users and added desktop, content-creation, and integration features.

What launched on April 28:

- Desktop app (Preview) for macOS and Windows – stays connected to local files, calendar, and communications without a browser.

- Free and Plus pricing plans – no AWS account required to sign up. Use a personal email or Google, Apple, GitHub, or Amazon credentials.

- Visual asset generation – create documents, presentations, infographics, and images directly from the chat interface.

- New integrations – Google Workspace, Zoom, Airtable, Dropbox, and Microsoft Teams added as native connectors.

- Custom app builder (Preview) – build intelligent apps, dashboards, and web pages using natural language.

The cost implication: Quick’s Free tier brings AWS AI capabilities to users who previously needed enterprise Bedrock access to use them. Teams running Bedrock-powered internal tools should evaluate whether Quick can replace custom builds, which carry per-token Bedrock costs.

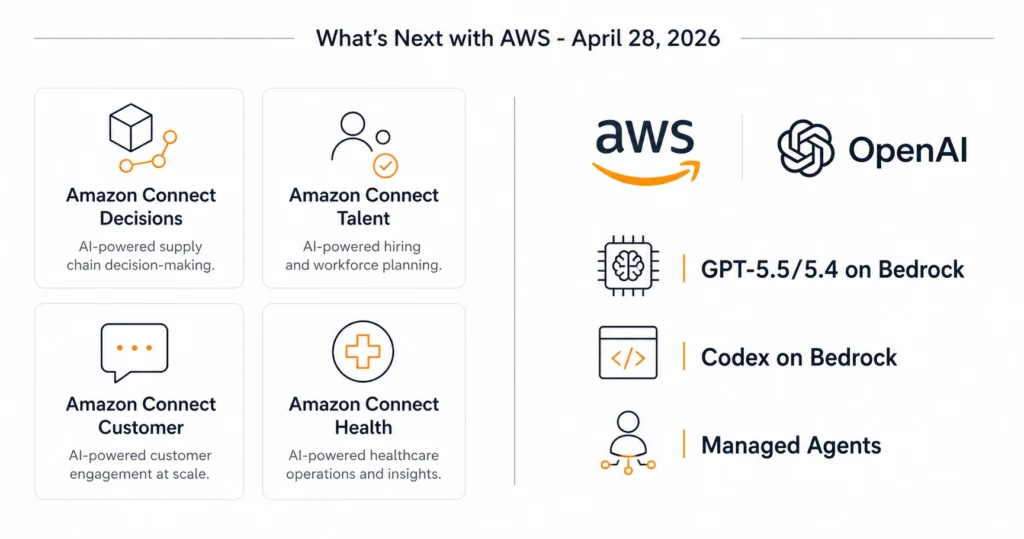

2. Amazon Connect Splits into Four Agentic AI Products

Amazon Connect – previously a single contact center product – has been restructured into four specialized agentic AI solutions. Each targets a distinct business function.

| Product | Focus Area | Key Capability | Status |
|---|---|---|---|
| Amazon Connect Decisions | Supply chain | Proactive planning using AI – combines 30 years of Amazon operational science with 25+ supply chain tools | GA |
| Amazon Connect Talent | Hiring | AI-led interviews, science-backed assessments, consistent evaluation across candidates | Preview |
| Amazon Connect Customer | Customer service | Previously ‘Amazon Connect’ – intelligent CX across voice, chat, digital; configurable in weeks without code | GA |
| Amazon Connect Health | Healthcare | Agentic patient verification, appointment management, ambient documentation, medical coding | GA |

The restructuring matters for procurement: existing Amazon Connect contracts and reserved capacity apply to Connect Customer. The other three products carry separate pricing. Teams with cross-department Connect deployments should audit which product line each use case falls under before the next billing cycle.

3. AWS and OpenAI Partnership Expands: GPT Models Come to Bedrock

AWS and OpenAI announced an expanded partnership on April 28. Three capabilities entered limited preview:

- OpenAI models on Amazon Bedrock (Limited preview) – GPT-5.5 and GPT-5.4 accessible through the standard Bedrock API with unified security, governance, and cost controls. No separate OpenAI account required.

- Codex on Amazon Bedrock (Limited preview) – OpenAI’s coding agent available within AWS environments. Authenticate with AWS credentials, run inference through Bedrock infrastructure, and count Codex usage toward AWS cloud commitments.

- Amazon Bedrock Managed Agents powered by OpenAI (Limited preview) – Build production-ready OpenAI-powered agents using AWS infrastructure. Built on the OpenAI harness for faster execution and reliable long-running task steering.
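Because the models are served through the standard Bedrock API, an application should be able to reach them with the same Bedrock Runtime `converse` call it already uses for other models. The sketch below only builds that request. It assumes boto3's `bedrock-runtime` client, and the model ID is a hypothetical placeholder, since identifiers for the limited-preview models are not given here.

```python
"""Sketch: build a Bedrock Runtime Converse request for an OpenAI model."""

# HYPOTHETICAL placeholder. Replace it with the model ID shown in the Bedrock
# console once the account has limited-preview access.
HYPOTHETICAL_GPT_MODEL_ID = "openai.gpt-5-5-placeholder"


def build_converse_request(
    prompt: str,
    model_id: str = HYPOTHETICAL_GPT_MODEL_ID,
    max_tokens: int = 512,
    temperature: float = 0.2,
) -> dict:
    """Return keyword arguments for bedrock-runtime's converse() operation."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


if __name__ == "__main__":
    request = build_converse_request("Summarize last week's cost anomalies.")
    print(request)
    # With AWS credentials and preview access, the same dict would be sent as:
    #   import boto3
    #   client = boto3.client("bedrock-runtime", region_name="us-east-1")
    #   response = client.converse(**request)
    #   print(response["output"]["message"]["content"][0]["text"])
```

Keeping request construction separate from the client call means switching between models only changes `modelId`, which is the practical benefit of a unified API.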

Cost note: OpenAI models on Bedrock are billed through Bedrock’s standard per-token model. GPT-5.5 and GPT-5.4 pricing had not been published at the time of writing.
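Until rates are published, any estimate can only be parameterized. A minimal sketch, assuming separate per-million-token input and output prices; the rates below are hypothetical placeholders, not AWS or OpenAI prices. The token counts correspond to the `inputTokens` and `outputTokens` fields the Converse API returns under `usage`.

```python
"""Sketch: per-token cost estimate with placeholder prices."""

# HYPOTHETICAL rates in USD per 1M tokens; replace once pricing is published.
PRICE_IN_PER_M = 2.00
PRICE_OUT_PER_M = 8.00


def request_cost(input_tokens: int, output_tokens: int,
                 price_in_per_m: float = PRICE_IN_PER_M,
                 price_out_per_m: float = PRICE_OUT_PER_M) -> float:
    """Cost of one request; input and output tokens are priced separately."""
    return (input_tokens * price_in_per_m + output_tokens * price_out_per_m) / 1_000_000


def monthly_cost(requests_per_day: int, avg_input: int, avg_output: int,
                 days: int = 30, **prices: float) -> float:
    """Project a month of traffic from average token counts per request."""
    return requests_per_day * days * request_cost(avg_input, avg_output, **prices)


if __name__ == "__main__":
    # Illustrative workload: 5,000 requests/day, 1,500 input and 400 output tokens each.
    print(f"${monthly_cost(5_000, 1_500, 400):,.2f} per month at placeholder rates")
```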

AI and Machine Learning: All the April 2026 Launches on Amazon Bedrock and SageMaker

Claude Opus 4.7 Now Available on Amazon Bedrock (April 20)

Anthropic’s Claude Opus 4.7 launched on Amazon Bedrock on April 20, 2026. It is the most capable model in the Opus family and the first to run on Bedrock’s next-generation inference engine.

Performance benchmarks at launch:

- 64.3% on SWE-bench Pro – one of the most demanding software engineering evaluations available.

- 87.6% on SWE-bench Verified – a widely used indicator of real-world coding task completion.

Infrastructure features on Bedrock:

- Dynamic capacity allocation – no pre-provisioned inference capacity required.

- Adaptive thinking – Claude sizes its thinking token budget to each request’s complexity instead of using a fixed budget. This changes cost behavior versus Sonnet: thinking-token spend, and therefore per-request cost, varies from request to request.

- 1M token context window – full context available at launch.

- High-resolution image support – improved accuracy on charts, dense documents, and screen UIs.

Available at launch in US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm), with up to 10,000 requests per minute per account per region.
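A per-account quota is easier to respect if callers throttle themselves before Bedrock does. A minimal sketch of a client-side token bucket, targeted below the 10,000 requests-per-minute figure to leave headroom. The 90% target and one-second burst size are assumptions, and the service's own quota accounting may differ from this model.

```python
"""Sketch: client-side token-bucket limiter for a requests-per-minute quota."""
import threading
import time
from typing import Callable, Optional


class TokenBucket:
    """Refills at rate_per_minute / 60 tokens per second, holding at most `capacity`."""

    def __init__(self, rate_per_minute: float, capacity: Optional[float] = None,
                 clock: Callable[[], float] = time.monotonic):
        self.rate_per_sec = rate_per_minute / 60.0
        # Default burst: one second's worth of requests, so any 60-second window
        # admits at most rate_per_minute + capacity requests.
        self.capacity = capacity if capacity is not None else self.rate_per_sec
        self.tokens = float(self.capacity)
        self.clock = clock
        self.last = clock()
        self.lock = threading.Lock()

    def try_acquire(self) -> bool:
        """Take one token if available; never blocks."""
        with self.lock:
            now = self.clock()
            elapsed = now - self.last
            self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_sec)
            self.last = now
            if self.tokens >= 1:
                self.tokens -= 1
                return True
            return False

    def acquire(self) -> None:
        """Block until a token is available, sleeping one refill interval at a time."""
        while not self.try_acquire():
            time.sleep(1.0 / self.rate_per_sec)


# Target 90% of the 10,000 RPM quota (an assumed safety margin).
limiter = TokenBucket(rate_per_minute=0.9 * 10_000)
```

A worker would call `limiter.acquire()` before each Bedrock request. The limiter only coordinates threads within one process; spreading a quota across processes or hosts would need a shared store.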

Anthropic Training on AWS Trainium and Graviton – Deep Silicon Partnership

As announced the week of April 27, Anthropic is now training its most advanced foundation models on AWS Trainium and Graviton infrastructure. This is a silicon-level co-engineering relationship with Annapurna Labs – not a standard cloud customer arrangement.

Claude Cowork also launched in Amazon Bedrock the same week. It brings collaborative AI capabilities to enterprise builders – enabling teams to work alongside Claude within their existing Bedrock environment, with data kept in AWS. This is separate from the standard Claude API.

Meta Signs Agreement to Deploy AWS Graviton at Scale

Meta signed an agreement to deploy tens of millions of AWS Graviton cores for CPU-intensive agentic AI workloads, including real-time reasoning, code generation, search, and multi-step task orchestration. This is one of the largest single Graviton commitments announced publicly. The deployment signals continued enterprise demand for non-GPU AI compute, particularly for inference-heavy agentic pipelines where Graviton can deliver better throughput per dollar than on-demand GPUs at the same scale.

Granular Cost Attribution for Amazon Bedrock

Amazon Bedrock now automatically attributes inference costs to the specific IAM principal that made each API call. Results flow into AWS Cost and Usage Reports (CUR 2.0). Teams can aggregate costs by team, project, or cost center using IAM principal tags and session tags.

This is the first native Bedrock feature that enables chargeback without custom logging middleware. Previously, teams running multiple projects on Bedrock had no API-level cost attribution – all inference spend aggregated under the same line item.
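Once attribution lands in CUR 2.0, chargeback reduces to a group-by. A minimal sketch over rows already filtered to Bedrock usage. The column names `iam_principal` and `tag_team` are hypothetical stand-ins, so map them to the actual fields in your CUR 2.0 export; `line_item_unblended_cost` is the standard CUR cost column. Principals with no team tag are reported separately rather than dropped, so untagged spend stays visible.

```python
"""Sketch: roll Bedrock CUR rows up to teams for chargeback."""
from collections import defaultdict
from decimal import Decimal

# HYPOTHETICAL column names for the calling principal and its team tag.
PRINCIPAL_COL = "iam_principal"
TEAM_COL = "tag_team"
COST_COL = "line_item_unblended_cost"


def chargeback_by_team(rows):
    """Sum cost per team tag; each untagged principal gets its own bucket."""
    totals = defaultdict(Decimal)
    for row in rows:
        team = (row.get(TEAM_COL) or "").strip()
        key = team if team else f"untagged:{row[PRINCIPAL_COL]}"
        # Decimal avoids float rounding drift when summing many small charges.
        totals[key] += Decimal(row[COST_COL])
    return dict(totals)
```

The same grouping works for project or cost-center tags by pointing `TEAM_COL` at a different column.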

For a broader look at how to reduce AWS AI spend including Bedrock, the Usage.ai guide on AWS Cost Optimization covers Savings Plans, commitment automation, and cost control strategies relevant to AI workloads.

Amazon Bedrock AgentCore: Managed Harness, CLI, and Coding Skills

Amazon Bedrock AgentCore added three new capabilities in late April:

- Managed harness (Preview) – Define an agent by specifying a model, system prompt, and tools, then run it immediately with no orchestration code. When ready for full control, export the harness as Strands-based code.

- AgentCore CLI – Deploy agents with infrastructure-as-code governance. AWS CDK supported at launch, Terraform coming later in 2026. Available in 14 AWS regions at no additional charge.

- AgentCore skills for coding assistants – Pre-built skills targeting common coding assistant patterns.

Amazon SageMaker AI: Optimized Generative AI Inference Recommendations

SageMaker AI can now automatically identify optimized deployment configurations for generative AI models – including instance type, container, and inference parameters. The feature removes the manual tuning step for getting a model into production at efficient cost and latency.

Compute and Networking: AWS Interconnect GA, New EC2 Instances, and EKS Hybrid

AWS Interconnect Reaches General Availability (April 20)

On April 20, 2026, AWS Interconnect made two managed private connectivity capabilities generally available.

AWS Interconnect – Multicloud provides Layer 3 private connections between AWS VPCs and other cloud providers. Google Cloud is available now. Azure and Oracle Cloud Infrastructure are coming later in 2026. Traffic flows over the AWS global backbone and the partner cloud’s private network – never over the public internet. Features include MACsec encryption, multi-facility resiliency, and CloudWatch monitoring. AWS published the underlying specification on GitHub under Apache 2.0, allowing any cloud provider to become an Interconnect partner.

AWS Interconnect – Last Mile simplifies high-speed private connections from branch offices, data centers, and remote locations to AWS through existing network providers. It automatically provisions four redundant connections across two physical locations, configures BGP routing, and activates MACsec encryption and Jumbo Frames by default. Bandwidth can be adjusted from 1 Gbps to 100 Gbps in the console without reprovisioning. It launched in US East (N. Virginia) with Lumen as the initial partner.

Amazon EC2 C8in and C8ib Instances – Generally Available

Two new compute-optimized EC2 instance families reached GA in April 2026:

- C8in – Powered by custom 6th-generation Intel Xeon Scalable processors and 6th-generation AWS Nitro cards. Up to 43% higher performance versus C6in. 600 Gbps network bandwidth – the highest of any enhanced networking EC2 instance. Scales to 384 vCPUs.

- C8ib – Up to 300 Gbps EBS bandwidth, the highest of any non-accelerated compute instance. Designed for storage-intensive, network-heavy workloads that need the memory-to-vCPU ratio of a compute-optimized instance.

Amazon EKS Hybrid Nodes Gateway – Automated Hybrid Kubernetes Networking

Amazon EKS now offers the EKS Hybrid Nodes gateway, which automates networking between an EKS cluster VPC and Kubernetes pods running on EKS Hybrid Nodes (on-premises or edge). The gateway eliminates the requirement to make on-premises pod networks routable or to coordinate network infrastructure changes. It automatically enables pod-to-pod traffic across cloud and on-premises environments, control plane-to-webhook communication, and connectivity for AWS services like Application Load Balancers. Available at no additional charge.

Storage: Lambda Gets Native S3 File System Mounting

AWS Lambda Functions Can Now Mount Amazon S3 Buckets as File Systems

Lambda functions can now mount Amazon S3 buckets as file systems using S3 Files. Functions can perform standard file operations – read, write, append – without first downloading the data. Built on Amazon EFS, S3 Files provides file system semantics with S3’s scalability, durability, and cost-effectiveness.

Multiple Lambda functions can connect to the same S3 file system simultaneously, sharing data through a common workspace. This is particularly relevant for AI and machine learning workloads where agents need to persist memory and share state across pipeline steps – a use case that previously required either DynamoDB-backed state management or custom S3 object logic.
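Because the mount behaves like a local file system, pipeline steps can share state with ordinary file I/O. A minimal sketch of a Lambda handler that appends one JSON record per step to a per-run log. The mount path and the `SHARED_FS_PATH` environment variable are hypothetical, and the sketch assumes the steps of a single run execute one at a time, since concurrent appends to the same file could interleave.

```python
"""Sketch: share agent state across Lambda steps through a mounted file system."""
import json
import os
import tempfile
from pathlib import Path

# HYPOTHETICAL mount path; use the local mount path configured on the function.
MOUNT_PATH = Path(os.environ.get("SHARED_FS_PATH", "/mnt/shared"))


def _run_file(run_id: str, root: Path) -> Path:
    return root / "agent-memory" / f"{run_id}.jsonl"


def read_memory(run_id: str, root: Path = MOUNT_PATH) -> list:
    """Return every record written so far for this run (empty if none)."""
    path = _run_file(run_id, root)
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


def append_memory(run_id: str, record: dict, root: Path = MOUNT_PATH) -> None:
    """Append one JSON line; assumes steps within a run execute one at a time."""
    path = _run_file(run_id, root)
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def handler(event, context):
    """Lambda entry point: read prior steps, then record this one."""
    history = read_memory(event["run_id"])
    append_memory(event["run_id"], {"step": len(history), "note": event.get("note", "")})
    return {"steps_recorded": len(history) + 1}


if __name__ == "__main__":
    # Local dry run against a temporary directory instead of the mount.
    with tempfile.TemporaryDirectory() as tmp:
        append_memory("run-1", {"step": 0, "note": "plan"}, root=Path(tmp))
        append_memory("run-1", {"step": 1, "note": "act"}, root=Path(tmp))
        print(read_memory("run-1", root=Path(tmp)))
```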

Cost consideration: S3 Files usage incurs standard S3 data transfer and EFS pricing on top of Lambda execution costs. Model the cost against your current pattern of S3 GetObject calls before switching.

Databases: Aurora Serverless Gets Faster, Database Savings Plans Expand

Amazon Aurora Serverless: Up to 30% Better Performance and Smarter Scaling

Amazon Aurora Serverless has moved to platform version 4, with up to 30% better performance than the previous version. The enhanced scaling algorithm now handles workloads where multiple tasks compete for resources simultaneously; busy APIs and agentic AI applications with burst activity and long idle windows are the primary targets.

The update also improves scale-to-zero behavior, making Aurora Serverless more cost-effective for development databases and low-traffic workloads. All improvements are available at no additional cost in platform version 4.

Database Savings Plans Now Support Amazon OpenSearch Service and Amazon Neptune Analytics

Database Savings Plans expanded coverage to include Amazon OpenSearch Service and Amazon Neptune Analytics. The plan provides up to 35% savings on eligible serverless and provisioned instance usage with a one-year commitment. Savings Plans apply automatically regardless of engine, instance family, size, or AWS region – making them operationally simpler than Reserved Instances for teams running multiple database engines.
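A Savings Plan commitment is a fixed hourly spend, so the headline discount only materializes when usage fills it. A simplified sketch that applies one uniform discount to every hour; real Database Savings Plans rates vary by engine and usage type, and 35% is the upper bound quoted above, not a typical rate. It models both sides: usage above the covered amount is billed at on-demand rates, and unused commitment is still paid.

```python
"""Sketch: hourly cost under a Savings Plan commitment (single uniform discount)."""


def hourly_cost(on_demand_usage: float, commitment: float, discount: float) -> float:
    """Cost for one hour.

    on_demand_usage: what the hour's eligible usage would cost at on-demand rates.
    commitment: committed spend per hour at Savings Plan rates.
    discount: fraction off on-demand (e.g. 0.35 for the "up to 35%" ceiling).
    """
    # The commitment buys this much on-demand-equivalent usage per hour.
    covered = commitment / (1 - discount)
    overflow = max(0.0, on_demand_usage - covered)  # billed at on-demand rates
    return commitment + overflow  # the commitment is charged even if unused


def compare(hourly_usage: list, commitment: float, discount: float) -> tuple:
    """Return (on-demand total, total with the plan) over a series of hours."""
    on_demand = sum(hourly_usage)
    with_plan = sum(hourly_cost(u, commitment, discount) for u in hourly_usage)
    return on_demand, with_plan


if __name__ == "__main__":
    usage = [100.0, 50.0, 150.0]  # illustrative on-demand $/hour for three hours
    od, sp = compare(usage, commitment=65.0, discount=0.35)
    print(f"on-demand ${od:.2f}, with plan ${sp:.2f}")
```

In the illustrative hour with $50 of usage, the plan costs more than on-demand would, which is why commitments are usually sized to the steady baseline rather than the peak.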

The full guide to how Database Savings Plans work, which engines are covered, and when to use them over Reserved Instances is on Usage.ai: AWS Database Savings Plans Explained.

Developer Tools: AWS Transform in IDEs, Aurora DSQL PHP Connector, ECR Updates

AWS Transform Now Available in Kiro and VS Code

AWS Transform – the agentic migration and modernization factory – is now accessible directly in Kiro (as a Power) and VS Code (as an extension). Supported transformations include Java, Python, and Node.js version upgrades and AWS SDK migrations. Job state is shared across the AWS web console, CLI, and IDE, meaning a migration started in the console can be inspected and continued from VS Code without context loss.

Aurora DSQL Connector for PHP

A new Aurora DSQL Connector for PHP (PDO_PGSQL) simplifies PHP application development on Aurora DSQL. The connector handles IAM token generation, SSL configuration, connection pooling, and opt-in optimistic concurrency control retry with exponential backoff automatically. PHP developers no longer need to build custom authentication middleware for Aurora DSQL.
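The connector handles this retry logic itself for PHP; the general pattern is sketched below in Python for illustration. Under optimistic concurrency control, conflicts are typically detected at commit time, so the whole transaction function is re-run rather than a single statement. `ConflictError` is a hypothetical stand-in for whatever exception a given driver raises on an OCC conflict, and the full-jitter backoff is an assumption, not the connector's documented strategy.

```python
"""Sketch: retry an optimistic-concurrency transaction with exponential backoff."""
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class ConflictError(Exception):
    """HYPOTHETICAL stand-in for a driver's OCC conflict exception."""


def run_with_occ_retry(txn: Callable[[], T], max_attempts: int = 5,
                       base_delay: float = 0.05, max_delay: float = 2.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Run txn(); on a conflict, back off and re-run the whole transaction."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return txn()
        except ConflictError:
            if attempt == max_attempts:
                raise  # give up and surface the last conflict
            # Exponential cap (base, 2*base, 4*base, ...) with full jitter.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the function testable without real delays.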

Amazon ECR Pull Through Cache: OCI Referrer Discovery and Sync

ECR’s pull through cache now automatically discovers and syncs OCI referrers – image signatures, SBOMs, and attestations – from upstream registries into private repositories. End-to-end image signature verification and SBOM discovery workflows now work without client-side workarounds. This is relevant for teams running supply chain security tooling (Sigstore, Cosign) in CI/CD pipelines backed by ECR.

Security: Post-Quantum TLS in Secrets Manager, VPC Encryption Controls Pricing

AWS Secrets Manager Now Supports Hybrid Post-Quantum TLS

Secrets Manager now supports hybrid post-quantum key exchange using ML-KEM (CRYSTALS-Kyber), protecting secrets in transit against both classical attacks and future quantum-computing threats. This protection is enabled automatically in Secrets Manager Agent 2.0.0+, Lambda Extension v19+, and CSI Driver 2.0.0+. Teams running these versions need no configuration change.
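To find hosts that do not yet get this protection automatically, a fleet inventory can be checked against the minimum versions listed above. A minimal sketch; the component keys are hypothetical labels, and the parser handles only plain numeric versions such as `2.0.0` or `v19`, not pre-release suffixes.

```python
"""Sketch: flag Secrets Manager clients below the post-quantum TLS minimums."""

# Minimum versions from the text; the dictionary keys are HYPOTHETICAL labels.
MIN_VERSIONS = {
    "secrets-manager-agent": (2, 0, 0),
    "lambda-extension": (19,),
    "csi-driver": (2, 0, 0),
}


def parse_version(text: str) -> tuple:
    """Turn 'v19' or '2.0.0' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().lstrip("v").split("."))


def _pad(version: tuple, width: int) -> tuple:
    # Pad with zeros so (19,) compares equal to (19, 0, 0).
    return version + (0,) * (width - len(version))


def has_pq_tls(component: str, version: str) -> bool:
    """True if this component version meets the documented minimum."""
    minimum = MIN_VERSIONS[component]
    current = parse_version(version)
    width = max(len(minimum), len(current))
    return _pad(current, width) >= _pad(minimum, width)


if __name__ == "__main__":
    inventory = [("lambda-extension", "v18"), ("csi-driver", "2.1.0")]
    for name, ver in inventory:
        print(name, ver, "OK" if has_pq_tls(name, ver) else "upgrade needed")
```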

VPC Encryption Controls: Free Preview Ended March 1, 2026

VPC Encryption Controls transitioned from free preview to a paid feature on March 1, 2026. The feature audits and enforces encryption-in-transit for all traffic flows within and across VPCs in a region. Monitor mode detects unencrypted traffic; enforce mode prevents it. Teams that adopted VPC Encryption Controls during preview are now being billed.

Cost Management and FinOps: What April 2026 Means for Cloud Spend

EC2 Capacity Blocks for ML: 15% Price Increase Across All Regions

AWS increased pricing for EC2 Capacity Blocks for ML by approximately 15% across all regions. The increase affects the P5en, P5e, P5, and P4d GPU instance families, as well as Trn2 and Trn1 Trainium instances. Example impact: the p5e.48xlarge rose from approximately $34.61/hr to $39.80/hr in most regions. In US West (N. California), the same instance rose from $43.26/hr to $49.75/hr.
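The effect on a reservation is straightforward arithmetic. A worked sketch using the p5e.48xlarge rates quoted above; the four-instance, 14-day block is an illustrative assumption, and the calculation treats the quoted rates as flat per-instance hourly prices.

```python
"""Sketch: cost of a Capacity Block before and after the price change."""

# p5e.48xlarge hourly rates from the text (USD per instance-hour, approximate).
RATES = {
    "most regions": (34.61, 39.80),
    "US West (N. California)": (43.26, 49.75),
}


def block_cost(rate_per_hour: float, instances: int, days: int) -> float:
    """Total reservation cost: rate x instances x hours."""
    return rate_per_hour * instances * days * 24


if __name__ == "__main__":
    instances, days = 4, 14  # illustrative block size (assumption)
    for region, (old, new) in RATES.items():
        before = block_cost(old, instances, days)
        after = block_cost(new, instances, days)
        pct = (new / old - 1) * 100
        print(f"{region}: ${before:,.2f} -> ${after:,.2f} "
              f"(+${after - before:,.2f}, {pct:.1f}%)")
```

At these rates, a four-instance, two-week block costs about $6,975 more in most regions, and both quoted rate pairs work out to a 15.0% increase.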

AWS attributed the increase to supply and demand patterns for GPU capacity, and a spokesperson confirmed that the adjustment reflects expected supply and demand for the quarter. At the time of the January increase, AWS set the next pricing review for April 2026.

This increase affects organizations that reserve dedicated GPU capacity for large-scale ML training. On-demand and Savings Plan pricing were not changed.

For context on when to use Capacity Blocks versus Savings Plans for GPU workloads, see the Usage.ai guide on AWS Savings Plans vs Reserved Instances which covers the full commitment comparison.

Bedrock Granular Cost Attribution: The FinOps Impact

The Bedrock granular cost attribution feature (covered in the AI and Machine Learning section above) is the most significant FinOps-relevant AWS launch of April 2026 for teams running AI workloads. Before this feature, all Bedrock inference costs in an AWS account aggregated to a single line item in Cost Explorer, making it impossible to allocate AI spend to individual teams or projects without external logging.

The feature now automatically captures the IAM principal for each Bedrock API call and surfaces it in CUR 2.0. Organizations where multiple teams share a Bedrock deployment can now run chargeback or showback against actual consumption – a prerequisite for FinOps maturity on AI spend.

Recommended action: enable CUR 2.0 if not already active, apply IAM principal tags and session tags to your Bedrock callers, and validate that the cost attribution is appearing in Cost Explorer before the end of the month. Attribution does not backfill – historical spend prior to enabling the feature will not have IAM-level granularity.
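Session tags are attached when a caller assumes a role. A minimal sketch that builds the keyword arguments for the STS `AssumeRole` call with team, project, and cost-center tags. The tag keys and role ARN are a hypothetical naming convention; the role's trust policy must also allow `sts:TagSession`, and the checks reflect STS limits of 50 session tags, 128 characters per key, and 256 per value.

```python
"""Sketch: build an STS AssumeRole request carrying cost-attribution session tags."""

MAX_KEY_LEN = 128    # STS session tag key limit
MAX_VALUE_LEN = 256  # STS session tag value limit
MAX_TAGS = 50        # STS session tag count limit


def build_assume_role_request(role_arn: str, session_name: str, tags: dict) -> dict:
    """Return keyword arguments for boto3's sts.assume_role()."""
    if len(tags) > MAX_TAGS:
        raise ValueError(f"at most {MAX_TAGS} session tags are allowed")
    for key, value in tags.items():
        if not 1 <= len(key) <= MAX_KEY_LEN or len(value) > MAX_VALUE_LEN:
            raise ValueError(f"tag {key!r} exceeds STS length limits")
    return {
        "RoleArn": role_arn,
        "RoleSessionName": session_name,
        "Tags": [{"Key": k, "Value": v} for k, v in tags.items()],
    }


if __name__ == "__main__":
    # HYPOTHETICAL role ARN and tag keys.
    request = build_assume_role_request(
        "arn:aws:iam::111122223333:role/bedrock-caller",
        "search-batch",
        {"team": "search", "project": "ranking", "cost-center": "cc-1234"},
    )
    print(request["Tags"])
    # With credentials, the Bedrock client would then use the tagged session:
    #   import boto3
    #   creds = boto3.client("sts").assume_role(**request)["Credentials"]
    #   bedrock = boto3.client(
    #       "bedrock-runtime",
    #       aws_access_key_id=creds["AccessKeyId"],
    #       aws_secret_access_key=creds["SecretAccessKey"],
    #       aws_session_token=creds["SessionToken"],
    #   )
```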

AWS Microcredentials Now Free

AWS microcredentials are now available at no cost through AWS Skill Builder in all countries where the platform is offered. Microcredentials are hands-on assessments in simulated live AWS environments – different from multiple-choice certifications. Removing the cost barrier makes it practical for teams to formally validate cloud skills without budget approval cycles.
