Cloud costs rarely grow linearly with product usage. Many teams discover that as their infrastructure scales, cloud spending increases faster than users, requests, or transactions. This is where cloud unit economics becomes essential.

A company might double its users but see cloud costs triple. An API platform might increase requests by 30% while infrastructure spend jumps 70%. Without the right metrics, these inefficiencies stay hidden inside a single line item called “cloud spend.”

That’s why modern DevOps and FinOps teams track cloud unit economics: the cost of delivering a single unit of product value, such as a user, API request, transaction, or workload. Instead of focusing only on total infrastructure spend, teams measure cost per unit of output, which shows whether their systems become more efficient or more expensive as they scale.

This shift from total cost to cost efficiency per workload is becoming a core FinOps practice.

In this guide, we’ll break down what cloud unit economics is, how to calculate it, and which metrics matter most for DevOps and FinOps teams as your cloud environment scales.

What Is Cloud Unit Economics?

Cloud unit economics is the practice of measuring how much cloud infrastructure costs to deliver a single unit of product value. Instead of focusing only on total cloud spend, organizations evaluate infrastructure efficiency by calculating metrics such as cost per user, cost per API request, cost per transaction, or cost per workload.

The goal is to understand whether cloud infrastructure becomes more efficient or less efficient as a product scales. If usage grows faster than infrastructure costs, unit economics improve. If infrastructure costs grow faster than product usage, unit economics deteriorate.

For example, consider a SaaS platform that spends $200,000 per month on cloud infrastructure and serves 500,000 active users. The cloud cost per user would be:

Cloud Cost per User = Total Cloud Spend ÷ Active Users

In this case: 200,000 ÷ 500,000 = 0.40

The company spends $0.40 per active user to run its platform.

Tracking this metric over time reveals whether infrastructure efficiency improves as the platform grows. If the user base doubles while infrastructure costs increase only slightly, cost per user decreases, indicating healthy cloud unit economics. However, when infrastructure costs rise faster than usage, it often reveals inefficiencies such as excess capacity, architectural limitations, or poorly optimized cloud purchasing strategies.
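To make the trend concrete, here is a minimal sketch that computes cost per user across a few months. The first row uses the figures from the example above. The later months and the `cost_per_unit` helper are hypothetical and exist only for illustration.

```python
def cost_per_unit(total_cost: float, units: int) -> float:
    """Cloud cost of delivering one unit (user, request, transaction, ...)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


# (label, total cloud spend in USD, active users)
# The first row matches the example above; the other rows are made-up data.
monthly = [
    ("month 1", 200_000, 500_000),
    ("month 2", 210_000, 600_000),
    ("month 3", 260_000, 620_000),
]

trend = [(label, cost_per_unit(spend, users)) for label, spend, users in monthly]

for label, value in trend:
    print(f"{label}: ${value:.3f} per active user")
```

In this made-up series, cost per user falls in month 2 and then rises above the baseline in month 3, which is the kind of signal that spend has started to outpace usage.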

Common Cloud Unit Economics Metrics

The exact unit used in cloud unit economics depends on how a product delivers value and how workloads consume infrastructure. Some of the most common metrics include:

- Cost per active user: Common for SaaS platforms. This metric measures how much cloud infrastructure is required to support each user of the product. If the user base grows while infrastructure costs increase slowly, the cost per user decreases, indicating efficient scaling.

- Cost per API request: Widely used by API platforms and developer tools. Each request consumes compute, networking, and sometimes database resources. Tracking cost per request helps teams evaluate how efficiently their architecture handles traffic and whether improvements like caching or optimized compute pricing reduce infrastructure cost per call.

- Cost per transaction: Important for fintech, payments, and e-commerce systems. This metric measures how much infrastructure is required to process each transaction. Since margins in these industries are often sensitive to processing costs, inefficient infrastructure can quickly erode profitability as transaction volume grows.

- Cost per workload or job: Used by data platforms, batch processing systems, and machine learning pipelines. Instead of tracking users or requests, teams measure the cost of running specific workloads such as data pipelines, training jobs, or analytics queries.

- Cost per compute task or inference: Increasingly important for AI and ML platforms. Teams track the infrastructure cost required to run each model inference or compute task to understand whether their AI workloads scale efficiently.

Most mature organizations track multiple unit economics metrics simultaneously. For example, a SaaS platform might monitor cost per user alongside cost per API request and background job costs. Together, these metrics provide a clearer view of how infrastructure decisions impact efficiency as the platform grows.

Why Cloud Unit Economics Is an Important KPI for DevOps and FinOps Teams

Cloud unit economics helps DevOps and FinOps teams understand whether infrastructure spending is creating proportional product value. Several important operational decisions depend on understanding unit economics:

- Evaluating infrastructure efficiency: Cloud unit economics reveals whether systems are becoming more efficient as they scale. Ideally, infrastructure costs grow more slowly than product usage. When this happens, the cost per user, request, or workload decreases, indicating that the platform is scaling efficiently.

- Detecting architectural inefficiencies: Unit metrics often expose issues that total spend dashboards cannot. Inefficient microservices, excessive database queries, poorly designed data pipelines, or unnecessary compute usage can all drive up cost per workload even if overall spend appears reasonable.

- Improving engineering decision-making: When teams understand the cost impact of each workload, architectural decisions can be evaluated not only for performance and reliability but also for cost efficiency. Changes such as caching strategies, workload consolidation, or improved autoscaling policies can directly improve unit economics.

- Connecting engineering metrics to business outcomes: Unit economics creates a shared language between engineering, finance, and leadership. Instead of discussing abstract infrastructure costs, teams can measure how infrastructure spending affects the cost of serving each user, request, or transaction.

- Supporting long-term cloud cost optimization: Sustainable cost optimization requires more than reducing waste. It requires ensuring that infrastructure spending scales efficiently with the product itself. Cloud unit economics provides the metrics needed to track and maintain that efficiency over time.

For organizations running large-scale cloud environments, these metrics become a key part of mature FinOps practices, helping teams move beyond simple cost visibility toward measurable infrastructure efficiency.

How to Calculate Cloud Unit Economics

Calculating cloud unit economics means determining how much cloud infrastructure cost is required to deliver one unit of product value. At its simplest, cloud unit economics follows a straightforward formula:

Cloud Unit Cost = Total Infrastructure Cost ÷ Total Product Units Delivered

Where the “unit” represents the core output of your system, such as:

- active users

- API requests

- transactions

- compute jobs

- AI inferences

- data processed

For example, consider an API platform running on cloud infrastructure:

- Monthly cloud spend for API services: $120,000

- API requests processed that month: 80 million

The cloud unit economics for that system would be:

Cost per API request = $120,000 ÷ 80,000,000

Cost per request = $0.0015

This number becomes a baseline efficiency metric. If the platform scales to 160 million requests while infrastructure costs rise to only $150,000, the cost per request drops to $0.00094, indicating improved infrastructure efficiency.
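The comparison above can be expressed as a small check: unit economics improve when usage grows faster than cost. The sketch below uses the figures from this example. The `growth_pct` helper is an illustrative name, not part of any tool.

```python
def growth_pct(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Figures from the API platform example above.
cost_before, requests_before = 120_000, 80_000_000
cost_after, requests_after = 150_000, 160_000_000

unit_before = cost_before / requests_before
unit_after = cost_after / requests_after

usage_growth = growth_pct(requests_before, requests_after)
cost_growth = growth_pct(cost_before, cost_after)
unit_change = growth_pct(unit_before, unit_after)

# Unit economics improve when usage grows faster than cost.
improving = usage_growth > cost_growth

print(f"usage {usage_growth:+.1f}%, cost {cost_growth:+.1f}%, "
      f"cost per request {unit_change:+.1f}%")
```

Here requests double while spend rises by a quarter, so the cost per request falls by roughly 37.5%.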

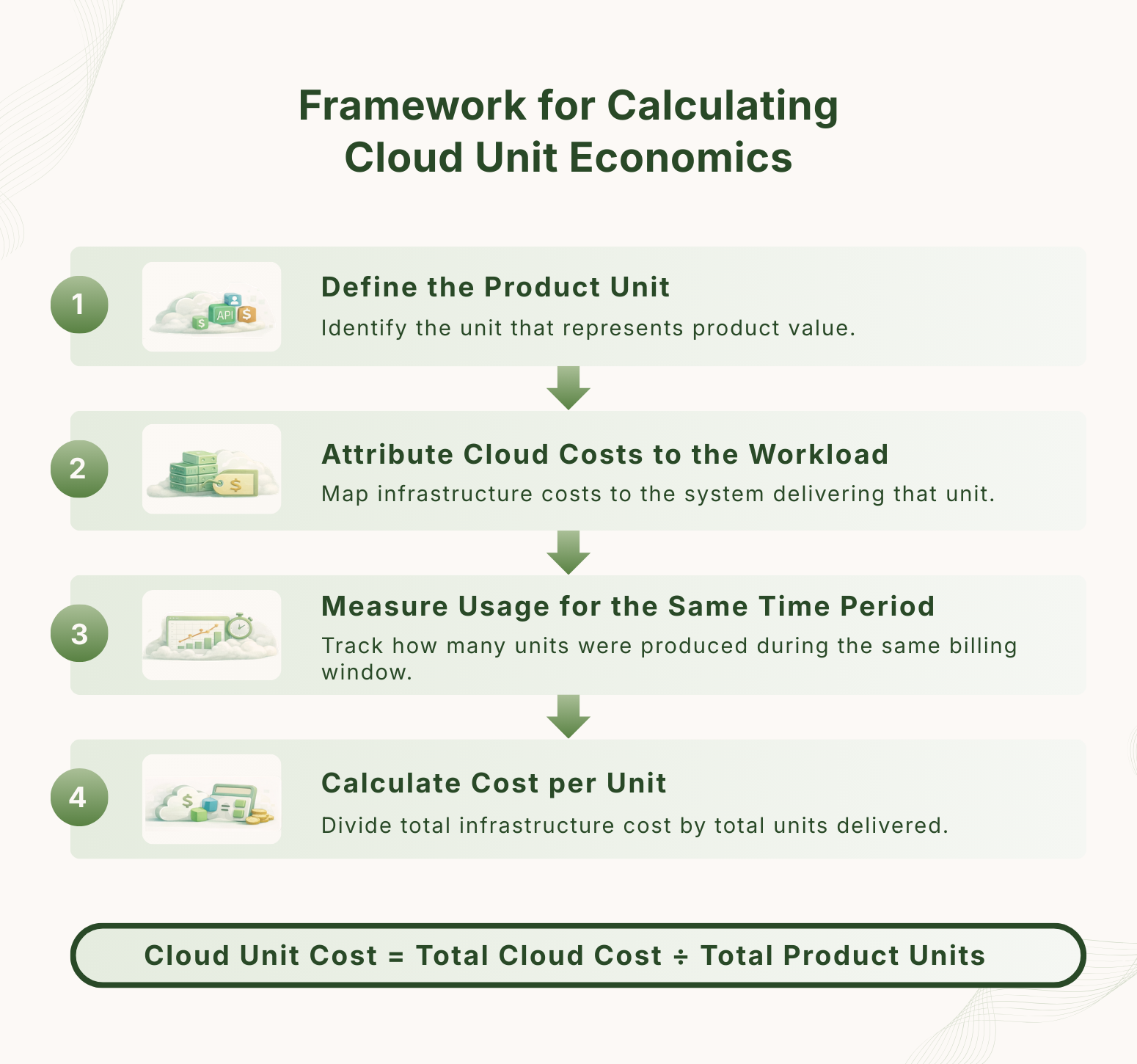

Step-by-Step Framework for Calculating Cloud Unit Economics

In real production environments, calculating unit economics typically involves four steps.

1. Define the product unit

Start by identifying the unit that represents value delivered by your system. This varies depending on the product architecture:

- SaaS platform – active users

- API platform – requests processed

- fintech system – transactions processed

- data platform – datasets or queries processed

- AI systems – model inference requests

Choosing the correct unit is critical because it determines how accurately infrastructure efficiency can be measured.

2. Attribute cloud costs to the relevant workload

Next, determine the portion of cloud infrastructure supporting that workload. This usually includes compute resources, storage systems, networking costs, and managed services such as databases or queues.

In mature environments, cost attribution relies on tagging strategies, service ownership models, and billing APIs to map infrastructure spend to specific systems.

3. Measure usage for the same time period

Usage metrics must align with the same billing period used to measure infrastructure costs. Teams typically pull this data from application monitoring systems, analytics pipelines, or service telemetry.

4. Calculate cost per unit

Finally, divide the attributed infrastructure cost by the number of product units generated during that period. This produces a unit-level efficiency metric such as:

- cost per user

- cost per API request

- cost per transaction

- cost per inference

Tracking this value over time allows teams to see whether architectural changes, scaling strategies, or infrastructure pricing models improve or degrade efficiency.
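The four steps can be sketched in a few lines. This is an illustration under stated assumptions, not a production pipeline: the `LineItem` record, the `workload` tag, and all figures are hypothetical, and real billing exports carry many more fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    period: str    # billing period, e.g. "2024-05"
    service: str   # e.g. "compute", "database"
    workload: str  # value of a hypothetical "workload" cost-allocation tag
    cost: float    # USD


def attributed_cost(items: list[LineItem], workload: str, period: str) -> float:
    """Step 2: sum the spend tagged to one workload in one billing period."""
    return sum(i.cost for i in items if i.workload == workload and i.period == period)


def unit_cost(items: list[LineItem], usage: dict, workload: str, period: str) -> float:
    """Steps 3 and 4: take usage for the same period, then divide."""
    units = usage.get((workload, period), 0)
    if units <= 0:
        raise ValueError(f"no usage recorded for {workload!r} in {period}")
    return attributed_cost(items, workload, period) / units


# Step 1: the product unit here is "API requests processed".
items = [
    LineItem("2024-05", "compute", "api", 70_000.0),
    LineItem("2024-05", "database", "api", 30_000.0),
    LineItem("2024-05", "networking", "api", 20_000.0),
    LineItem("2024-05", "compute", "batch", 15_000.0),  # different workload
    LineItem("2024-04", "compute", "api", 65_000.0),    # different period
]
usage = {("api", "2024-05"): 80_000_000}  # requests, from telemetry

api_cost = attributed_cost(items, "api", "2024-05")
api_unit_cost = unit_cost(items, usage, "api", "2024-05")
print(f"attributed: ${api_cost:,.0f}, cost per request: ${api_unit_cost:.4f}")
```

Keying both cost and usage on the same `(workload, period)` pair is the design choice that matters: it keeps one month's spend from being divided by another month's traffic.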

Factors That Impact Cloud Unit Economics

Cloud unit economics is a result of multiple technical and financial decisions across infrastructure, architecture, and purchasing strategy. Understanding what drives unit economics helps DevOps and FinOps teams identify where efficiency improvements will have the greatest impact. Some of the most common factors include:

- Architecture design: The way systems are designed has a direct impact on infrastructure efficiency. Poorly optimized microservices, excessive inter-service communication, inefficient database queries, or heavy data movement between services can dramatically increase compute and networking costs. Well-designed architectures minimize unnecessary processing and make better use of available resources.

- Compute utilization: Underutilized infrastructure is one of the biggest drivers of poor unit economics. Idle instances, oversized virtual machines, and inefficient autoscaling policies can result in paying for far more capacity than the workload actually requires. Improving utilization through better scaling strategies and instance selection can significantly lower cost per workload.

- Workload scaling patterns: Some systems scale predictably, while others experience volatile demand. Workloads that spike unpredictably often rely heavily on on-demand infrastructure, which is typically the most expensive pricing model. Efficient scaling strategies, such as autoscaling, containerization, or workload scheduling, can reduce unnecessary capacity and improve unit economics.

- Infrastructure pricing models: Cloud providers offer multiple pricing models for compute resources. On-demand pricing offers flexibility but comes at a higher cost, while commitment-based models, such as one- or three-year reserved capacity or spend commitments, provide significant discounts in exchange for predictable usage. Organizations that rely entirely on on-demand infrastructure often end up paying substantially more per workload.

- Commitment coverage: Compute services account for a large share of infrastructure in many cloud environments. Increasing commitment coverage, meaning more workloads run on discounted committed capacity instead of on-demand resources, can significantly lower the cost per request, user, or transaction.

- Operational efficiency: Operational practices such as continuous monitoring, rightsizing, and cost-aware engineering also influence unit economics. Organizations that regularly evaluate infrastructure efficiency and adjust resources as workloads evolve tend to maintain healthier unit metrics over time.

Because cloud environments evolve quickly, these factors rarely stay constant. New features, product growth, architectural changes, or shifting traffic patterns can all affect how efficiently infrastructure delivers value.

Best Practices for Improving Cloud Unit Economics

The following engineering practices tend to have the largest impact on cost per workload.

1. Increase compute utilization

Low CPU and memory utilization is one of the most common causes of poor cloud unit economics.

Many production environments run instances operating at 20–40% utilization while still paying for full capacity. Techniques such as instance rightsizing, workload bin-packing, and container scheduling can increase utilization and reduce the number of instances required to handle the same traffic.
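A rough sketch of why utilization matters: the same amount of real work needs fewer instances when each instance runs hotter. The workload size, instance shape, and hourly price below are hypothetical, and the model ignores headroom for spikes and failover.

```python
def instances_needed(busy_vcpus: int, vcpus_per_instance: int, utilization_pct: int) -> int:
    """Instances required to supply `busy_vcpus` of real work when each
    instance averages `utilization_pct` percent CPU utilization."""
    if not 0 < utilization_pct <= 100:
        raise ValueError("utilization_pct must be between 1 and 100")
    numerator = busy_vcpus * 100
    denominator = vcpus_per_instance * utilization_pct
    # Integer ceiling division avoids floating-point rounding surprises.
    return (numerator + denominator - 1) // denominator


BUSY_VCPUS = 96          # hypothetical steady-state work, in vCPUs
VCPUS_PER_INSTANCE = 16  # hypothetical instance shape
HOURLY_PRICE = 0.50      # hypothetical USD per instance-hour

for pct in (30, 60):
    count = instances_needed(BUSY_VCPUS, VCPUS_PER_INSTANCE, pct)
    print(f"{pct}% utilization: {count} instances, ${count * HOURLY_PRICE:.2f}/hour")
```

Under these assumptions, moving from 30% to 60% average utilization halves the instance count, and with it the compute cost behind every request served.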

2. Optimize instance selection and workload placement

Different instance families offer different price-to-performance characteristics. Compute-optimized, memory-optimized, or ARM-based instances can significantly change cost per workload depending on application behavior. Benchmarking workloads and selecting the correct instance types often improves unit economics without requiring architectural changes.

3. Reduce infrastructure overhead in service architectures

Distributed systems frequently introduce hidden infrastructure costs through excessive service-to-service communication, repeated database queries, or inefficient serialization and network calls.

Reducing unnecessary network hops, improving caching layers, and batching operations can significantly reduce compute and networking consumption per request.

4. Tune autoscaling policies for workload signals

Autoscaling configurations that rely on static CPU thresholds often lead to delayed scaling or excessive capacity buffers. More efficient scaling strategies use request rates, queue depth, or latency metrics to better align infrastructure provisioning with real workload demand.
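As an illustration of scaling on a workload signal, the sketch below sizes a service from its request rate rather than from CPU. It is a simplified model, not any specific autoscaler's algorithm. The function name, the per-replica target, and the bounds are assumptions chosen for the example.

```python
import math


def desired_replicas(current_rps: float, target_rps_per_replica: float,
                     min_replicas: int, max_replicas: int) -> int:
    """Replica count that keeps each replica at or below its target
    request rate, clamped to the configured bounds."""
    if target_rps_per_replica <= 0:
        raise ValueError("target_rps_per_replica must be positive")
    needed = math.ceil(current_rps / target_rps_per_replica)
    return max(min_replicas, min(max_replicas, needed))


# Hypothetical service: each replica handles about 400 requests/second.
for rps in (100, 4_500, 100_000):
    replicas = desired_replicas(rps, 400, min_replicas=2, max_replicas=50)
    print(f"{rps} rps -> {replicas} replicas")
```

The same shape works for queue depth (messages waiting divided by messages one worker can drain per interval). The point is that capacity follows demand directly instead of a lagging CPU average.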

5. Optimize compute pricing through commitment strategies

Compute workloads typically represent the largest portion of cloud infrastructure spend. Commitment-based pricing models allow organizations to access lower compute rates when workloads are predictable. Increasing commitment coverage for stable workloads can significantly reduce effective hourly compute costs compared to on-demand infrastructure.
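The effect of coverage on the effective rate can be approximated with a simple blend. The sketch assumes committed capacity is fully used and that every covered hour gets the same discount. The rate and discount values are hypothetical, not quotes from any provider.

```python
def effective_hourly_rate(on_demand_rate: float, discount: float, coverage: float) -> float:
    """Blended hourly rate when `coverage` (0 to 1) of usage runs on
    committed capacity priced at `discount` (0 to 1) below on-demand."""
    if not (0 <= discount < 1 and 0 <= coverage <= 1):
        raise ValueError("discount must be in [0, 1) and coverage in [0, 1]")
    committed_rate = on_demand_rate * (1 - discount)
    return coverage * committed_rate + (1 - coverage) * on_demand_rate


ON_DEMAND = 1.00  # hypothetical USD per instance-hour
DISCOUNT = 0.30   # hypothetical commitment discount

for coverage in (0.0, 0.5, 0.8):
    rate = effective_hourly_rate(ON_DEMAND, DISCOUNT, coverage)
    print(f"coverage {coverage:.0%}: ${rate:.2f}/hour")
```

Because unit cost is spend divided by units, any drop in the effective rate flows straight through to cost per request, user, or transaction, provided the commitment is actually used.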

6. Continuously measure cost per workload

Improvements in architecture or pricing strategy should be validated using unit-level efficiency metrics. Tracking metrics such as cost per request, cost per user, or cost per job over time allows teams to detect regressions early and verify that optimization efforts actually improve infrastructure efficiency.
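One way to catch regressions early is to compare the latest unit cost against a recent baseline. The sketch below is a minimal version of that idea. The 10% threshold, the sample values, and the `is_regression` name are illustrative assumptions and would need tuning for real data.

```python
from statistics import mean


def is_regression(history: list[float], latest: float, threshold_pct: float = 10.0) -> bool:
    """True when `latest` exceeds the mean of `history` by more than
    `threshold_pct` percent."""
    if not history:
        raise ValueError("history must contain at least one value")
    baseline = mean(history)
    return (latest - baseline) / baseline * 100 > threshold_pct


# Hypothetical cost-per-request values (USD) for the previous three periods.
recent = [0.0015, 0.0014, 0.0015]

print(is_regression(recent, 0.0019))   # well above the recent baseline
print(is_regression(recent, 0.00152))  # within normal variation
```

A check like this can run after each billing period or deployment, so a change that raises cost per request is flagged before it compounds across growing traffic.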

Organizations that consistently apply these practices tend to see cloud unit economics improve as their systems scale.

Cloud Unit Economics vs Cloud Cost Optimization

Cloud unit economics and cloud cost optimization are closely related, but they solve different problems in cloud financial management.

Cloud cost optimization focuses on reducing unnecessary infrastructure spending. This typically involves identifying idle resources, rightsizing instances, eliminating waste, and purchasing infrastructure using more efficient pricing models. The goal is to lower the overall cloud bill without affecting system performance or reliability.

Cloud unit economics, on the other hand, focuses on infrastructure efficiency relative to product output. Instead of asking how much the organization spends on cloud infrastructure, teams ask how much it costs to deliver each unit of value, such as a user session, API request, transaction, or workload.

In practice, the most effective cloud strategies combine both approaches. Cost optimization helps organizations eliminate waste and reduce unnecessary spending, while unit economics ensures that infrastructure efficiency improves as product usage grows. Together, they provide DevOps and FinOps teams with a complete framework for managing cloud infrastructure at scale.

Improve Your Cloud Unit Economics with Usage.ai

Managing cloud unit economics becomes significantly easier when infrastructure pricing and commitment coverage are continuously optimized. Usage.ai helps organizations increase commitment coverage, automate commitment purchasing, and reduce the risk associated with long-term cloud commitments.

Usage.ai analyzes billing and usage data, generates updated commitment recommendations every 24 hours, and automates commitment optimization so teams can capture infrastructure discounts without constant manual analysis. In cases where commitments become underutilized, the platform can return cashback to customers according to contract terms, helping organizations safely increase commitment coverage and improve cost efficiency.

If you’re looking to improve your cloud unit economics, we can help. Run a cloud savings analysis with Usage.ai to see where your environment could achieve higher commitment coverage and more efficient infrastructure pricing.

Frequently Asked Questions

1. What is cloud unit economics?

Cloud unit economics measures the cost required to deliver a unit of product value using cloud infrastructure. Instead of tracking total cloud spend, teams calculate metrics like cost per user, cost per API request, or cost per transaction to evaluate how efficiently their infrastructure scales.

2. Why is cloud unit economics important?

Cloud unit economics helps DevOps and FinOps teams determine whether infrastructure costs scale efficiently with product usage. It reveals whether systems become more efficient as demand grows or whether architecture, utilization, or pricing strategies are causing infrastructure costs to rise faster than usage.

3. How do you calculate cloud unit economics?

Cloud unit economics is calculated by dividing the total infrastructure cost for a workload by the number of units produced during the same time period. For example, dividing monthly cloud spend by the number of API requests processed gives the cost per request.

4. What are common cloud unit economics metrics?

Common cloud unit economics metrics include cost per active user, cost per API request, cost per transaction, cost per workload, and cost per AI inference. The correct metric depends on how the product delivers value and how infrastructure supports that output.

5. What affects cloud unit economics the most?

Cloud unit economics is influenced by several factors including architecture efficiency, compute utilization, autoscaling behavior, infrastructure pricing models, and commitment coverage. Poor resource utilization or inefficient system design can significantly increase the cost of delivering each unit of product value.

6. How can companies improve cloud unit economics?

Organizations improve cloud unit economics by increasing infrastructure utilization, optimizing system architecture, refining autoscaling policies, and adopting more efficient cloud pricing models. Many teams also use FinOps tooling to automate commitment optimization and continuously track cost per workload metrics.
