Amazon EC2 Pricing Explained

- Amazon EC2 pricing is based on instance type, region, operating system, and usage duration

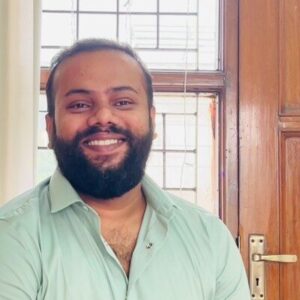

- There are four primary pricing models: On-Demand, Savings Plans, Reserved Instances, and Spot Instances

- Costs are influenced not just by pricing models, but by factors like resource utilization, data transfer, and infrastructure design

- Many teams overspend due to low commitment coverage and underutilized reserved capacity

- The most effective way to reduce EC2 costs is to optimize coverage and utilization while managing risk, not just to select cheaper pricing options

What Is Amazon EC2 Pricing?

Amazon EC2 (Elastic Compute Cloud) pricing refers to how you are charged for running virtual machines in the cloud through Amazon Web Services.

EC2 allows you to rent computing capacity on demand instead of owning physical servers. You choose the type of machine you need, how long it runs, and where it’s located, and you’re billed accordingly.

Think of it this way: Amazon EC2 is like renting a fleet of computers in remote data centers. You pay for each computer based on how powerful it is and how long you use it.

- Need more capacity? Launch more instances

- Need less? Shut them down

- Billing adjusts based on usage

How Amazon EC2 Pricing Works

Amazon EC2 pricing is primarily usage-based, but several variables determine the final cost:

- Instance type: CPU, memory, and performance characteristics

- Region: Pricing varies depending on geographic location

- Operating system: Linux, Windows, and licensed software have different costs

- Usage duration: Per-second billing (with a 60-second minimum) for most Linux and Windows instances, hourly billing in some other cases
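The sketch below shows how billing granularity affects a short-lived instance. It assumes per-second billing with a 60-second minimum, or whole-hour rounding for hourly-billed instances. The $0.10/hour rate is a placeholder, not an AWS price.

```python
import math


def run_cost(hourly_rate: float, runtime_seconds: int, per_second: bool = True) -> float:
    """Estimate the compute charge for a single instance run (simplified model)."""
    if per_second:
        # Per-second billing, assuming a 60-second minimum charge per run.
        billed_seconds = max(runtime_seconds, 60)
        return hourly_rate * billed_seconds / 3600
    # Hourly billing: any partial hour is charged as a full hour.
    billed_hours = math.ceil(runtime_seconds / 3600)
    return hourly_rate * billed_hours


RATE = 0.10  # hypothetical On-Demand rate in $/hour

print(f"{run_cost(RATE, 90 * 60):.2f}")                    # 90 minutes, per-second billing
print(f"{run_cost(RATE, 90 * 60, per_second=False):.2f}")  # 90 minutes, hourly billing
```

For a 90-minute run, per-second billing charges 1.5 hours ($0.15). Hourly billing rounds up to 2 hours ($0.20).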

Beyond Compute: What Pricing Actually Includes

While Amazon EC2 pricing is often thought of as “compute pricing,” the total cost of running workloads includes multiple components:

- Compute (instance runtime)

- Storage (attached volumes and snapshots)

- Data transfer (especially outbound traffic)

- Networking resources

This is why EC2 pricing can feel more complex than expected. You’re not just paying for a machine, but for the entire infrastructure around it.

What You Actually Pay for in EC2: Full Cost Breakdown

When people think about Amazon EC2 pricing, they usually focus on the cost of running virtual machines. But in reality, your EC2 bill is made up of multiple components, many of which are easy to overlook.

1. Compute (Instance Runtime)

This is the base cost of running Amazon EC2 instances. You’re charged based on:

- Instance type (CPU, memory, performance class)

- Runtime duration (per second or per hour)

- Pricing model (On-Demand, Savings Plans, Reserved Instances, Spot)

You pay for provisioned capacity, not actual utilization. For example, an instance running at 20% CPU still incurs 100% of its cost.

2. Storage (EBS Volumes and Snapshots)

Most Amazon EC2 workloads rely on Elastic Block Store (EBS) for persistent storage. You are billed for:

- Provisioned storage (GB/month)

- Performance tier (IOPS, throughput)

- Snapshots (backup storage over time)

Storage costs continue even if the instance is stopped. This makes EBS one of the most common sources of silent, persistent spend.
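This minimal sketch shows why EBS is a source of persistent spend. Compute is billed only for running hours, but a provisioned volume is billed for the whole month. The rates are assumed placeholders, not current AWS prices.

```python
# Hypothetical rates for illustration only; check current EBS and EC2 pricing.
HOURS_PER_MONTH = 730          # common approximation used for monthly estimates
EBS_RATE_PER_GB_MONTH = 0.08   # assumed storage rate, $/GB-month
INSTANCE_RATE_PER_HOUR = 0.10  # assumed On-Demand rate, $/hour


def monthly_bill(running_hours: float, volume_gb: int) -> dict:
    """Split one instance's monthly bill into compute and storage."""
    compute = INSTANCE_RATE_PER_HOUR * running_hours  # billed only while running
    storage = EBS_RATE_PER_GB_MONTH * volume_gb       # billed whether running or stopped
    return {"compute": round(compute, 2), "storage": round(storage, 2)}


print(monthly_bill(HOURS_PER_MONTH, 200))  # running all month
print(monthly_bill(0, 200))                # stopped all month: storage is still billed
```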

3. Data Transfer (Egress and Inter-Region Traffic)

Data transfer costs are frequently underestimated and can scale quickly.

- Inbound data (into AWS) is typically free

- Outbound data (to internet) is charged per GB

- Cross-region traffic incurs additional charges

In high-traffic systems, data transfer can become one of the top cost drivers, sometimes rivaling compute.

4. Networking Components

Beyond raw data transfer, EC2 relies on several networking services that add to total cost:

- Elastic IP addresses (AWS has charged hourly for public IPv4 addresses, attached or not, since February 2024; unused or detached ones are pure waste)

- Load balancers (hourly + usage-based pricing)

- NAT gateways (billed per hour plus per GB of data processed, which becomes expensive at scale)

These costs are often overlooked because they are distributed across services, not tied to a single instance.

5. Idle and Orphaned Resources

A significant portion of cloud waste comes from resources that are no longer actively used. Some common examples are detached EBS volumes, unused Elastic IPs, old snapshots and underutilized or forgotten instances.

These costs accumulate gradually and are rarely caught without active monitoring.
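Here is a minimal sketch of an idle-resource check. It lists detached EBS volumes and Elastic IPs with no association, using the `describe_volumes` and `describe_addresses` calls on a boto3 EC2 client.

- The helper names are hypothetical.
- The client is passed in, so the logic can be tested without AWS credentials.
- Snapshots and idle instances would need separate checks.

```python
def find_unattached_volumes(ec2) -> list[tuple[str, int]]:
    """Return (VolumeId, size in GiB) for EBS volumes in the 'available' state.

    A volume is 'available' when it is not attached to any instance, yet it is
    still billed for its provisioned size.
    """
    results, token = [], None
    while True:
        kwargs = {"Filters": [{"Name": "status", "Values": ["available"]}]}
        if token:
            kwargs["NextToken"] = token  # follow pagination for large accounts
        page = ec2.describe_volumes(**kwargs)
        results.extend((v["VolumeId"], v["Size"]) for v in page["Volumes"])
        token = page.get("NextToken")
        if not token:
            return results


def find_unassociated_eips(ec2) -> list[str]:
    """Return public IPs of Elastic IPs not associated with any instance or interface."""
    addresses = ec2.describe_addresses()["Addresses"]
    return [a["PublicIp"] for a in addresses if "AssociationId" not in a]


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   ec2 = boto3.client("ec2", region_name="us-east-1")
#   print(find_unattached_volumes(ec2), find_unassociated_eips(ec2))
```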

Why Amazon EC2 Bills Are Often Higher Than Expected

Even when pricing models are understood, total costs can still exceed expectations due to structural inefficiencies:

- Paying for Capacity Instead of Usage: Cloud pricing is based on what you provision, not what you consume. This often leads to overprovisioned instances, low utilization rates, and inefficient spend per workload.

- Fragmented Cost Visibility: Costs are distributed across multiple categories like Compute, Storage and Networking. This fragmentation makes it difficult to identify the true drivers of spend without detailed analysis.

- Misalignment Between Usage and Pricing Models: This usually takes one of two forms. Heavy reliance on On-Demand results in consistently higher costs, while overcommitment to RIs/Savings Plans results in paying for unused capacity.

- Accumulation of Background Costs: Small, persistent resources (snapshots, IPs, idle volumes) often go unnoticed. Each looks minor individually, but collectively they become significant over time.

Amazon EC2 Pricing Models Explained & When to Use Each

Amazon EC2 pricing models are often explained as four simple options. But in practice, they behave more like layers in a system. Each model exists to solve a different problem, and the way they interact determines your actual cost.

1. On-Demand Pricing

On-Demand is the simplest model: you launch instances, pay for what you use, and stop at any time. It’s designed for flexibility, and every AWS account starts here.

But in real environments, On-Demand quickly turns into the default layer that absorbs everything you didn’t plan for, like traffic spikes, new services, misconfigured workloads, and any usage not covered by commitments.

This is why many teams end up with a large portion of their infrastructure unintentionally running on On-Demand. And that’s where the cost problem begins.

2. Savings Plans

Savings Plans were introduced to reduce this inefficiency without forcing teams into rigid infrastructure decisions. Instead of committing to specific machines, you commit to a baseline level of spend, measured in dollars per hour, for a one- or three-year term.

In theory, this solves the flexibility problem, but in practice it introduces an accuracy problem. If your committed spend closely matches your actual usage, Savings Plans work extremely well. But if your infrastructure shifts, whether due to scaling, architectural changes, or seasonality, you start paying for capacity you no longer use.

So while Savings Plans reduce cost, they also introduce a dependency on your ability to predict future usage.

3. Reserved Instances (RIs)

Reserved Instances take this one step further. Instead of committing to spend, you commit to specific infrastructure.

This works well in environments where very little changes. Long-running workloads, stable systems, and predictable demand can benefit from the precision of Reserved Instances.

But modern cloud environments rarely stay static. Teams upgrade instance types, migrate workloads, or shift regions. When that happens, Reserved Instances can become misaligned with actual usage. At that point, the discount still exists, but the efficiency doesn’t.

4. Spot Instances

Spot Instances operate on a completely different principle. Instead of committing to future usage, you take advantage of unused capacity in the present.

This can dramatically reduce costs, but it shifts responsibility to your system design. AWS can reclaim Spot capacity with a two-minute interruption notice, so your applications need to tolerate interruptions, recover quickly, and distribute workloads intelligently.

For the right workloads, this works extremely well. For the wrong ones, it introduces instability.

The Reality: These Models Are Not Alternatives, But Layers

In production environments, these pricing models are rarely used independently. They are stacked on top of one another.

A typical system looks something like this:

- A baseline of predictable usage covered by Savings Plans or Reserved Instances

- A variable layer handled by On-Demand

- Opportunistic workloads running on Spot

The cost of your infrastructure is then determined by how much of your usage falls into each layer.

Where Most Teams Go Wrong

The common assumption is that choosing the “cheapest” pricing model leads to lower costs. But in practice, costs are driven by alignment, not selection.

- If too much usage falls into On-Demand, you overpay

- If commitments exceed actual usage, you waste spend

- If Spot is used without proper architecture, you risk instability

What matters is not which model you choose, but how closely your pricing strategy matches your workload behavior over time.

Amazon EC2 Pricing Example with Real-World Cost Breakdown

To understand how Amazon EC2 pricing behaves in practice, let’s look at a realistic scenario. The goal here is to build intuition for how pricing models impact total spend.

Scenario: A fairly typical baseline for a small-to-mid scale production system

Let’s assume a common setup:

- 10 × m5.large instances

- Running continuously (24/7)

- Linux-based workloads

- Deployed in a standard region (e.g., us-east-1)

At first glance, the choice of pricing model looks like the main lever, since each model applies a different discount to this baseline. But those savings only apply to the portion of your usage that is actually covered by each model.

The Role of Coverage in This Example

Let’s take the same workload and change only one variable: coverage.

Case 1: Low Coverage (40%)

- 40% of usage on Savings Plans

- 60% still on On-Demand

Even with commitments in place, a large portion of usage is still billed at full price. Total savings are limited.

Case 2: High Coverage (80%)

- 80% of usage on discounted pricing

- Only 20% on On-Demand

Now most of the workload benefits from lower rates, and total cost drops significantly.
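The two cases can be reproduced with a small blended-cost model. It rests on three assumptions:

- an m5.large On-Demand rate of $0.096/hour (approximately the us-east-1 Linux list price; check current pricing)
- a flat 30% Savings Plans discount, chosen purely for illustration
- a 730-hour month

```python
# Assumed inputs; verify the rate against current AWS pricing.
ON_DEMAND_RATE = 0.096     # $/hour per m5.large
DISCOUNT = 0.30            # hypothetical flat Savings Plans discount
INSTANCE_HOURS = 10 * 730  # 10 instances running 24/7 for a ~730-hour month


def monthly_cost(coverage: float) -> float:
    """Blend discounted (covered) and On-Demand (uncovered) usage."""
    on_demand_equivalent = INSTANCE_HOURS * ON_DEMAND_RATE
    covered = on_demand_equivalent * coverage * (1 - DISCOUNT)
    uncovered = on_demand_equivalent * (1 - coverage)
    return covered + uncovered


baseline = monthly_cost(0.0)
for coverage in (0.0, 0.4, 0.8):
    cost = monthly_cost(coverage)
    print(f"coverage {coverage:.0%}: ${cost:,.2f} ({1 - cost / baseline:.0%} saved)")
```

Under these assumptions, savings equal coverage × discount: 12% at 40% coverage and 24% at 80% coverage. The pricing model is held constant throughout.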

Why This Is More Important Than Pricing Model Choice

Most discussions around Amazon EC2 pricing focus on which model is cheapest. But in practice:

- A perfectly chosen pricing model with low coverage is still expensive

- A well-covered workload with a decent model is significantly cheaper

Now consider what happens if this workload changes: traffic drops, instances are scaled down, or the architecture is modified.

If you’ve committed to a fixed level of usage, your actual usage decreases but your committed spend remains the same. This is where savings can turn into waste.
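This sketch shows that waste under hypothetical numbers. It assumes an m5.large rate of $0.096/hour, a flat 30% discount, and a commitment sized for all 10 instances. The fleet then shrinks to 6 instances. The commitment is modeled as a fixed $/hour amount, as with Savings Plans.

```python
# Hypothetical inputs: assumed On-Demand rate, flat discount, 730-hour month.
ON_DEMAND_RATE = 0.096
DISCOUNT = 0.30
HOURS = 730

# Commitment sized for 10 instances; it stays fixed for the whole term.
COMMITMENT_PER_HOUR = 10 * ON_DEMAND_RATE * (1 - DISCOUNT)


def monthly_unused_commitment(instances_running: int) -> float:
    """Committed dollars per month that no remaining usage consumes."""
    usage_at_discount = instances_running * ON_DEMAND_RATE * (1 - DISCOUNT)
    return max(0.0, COMMITMENT_PER_HOUR - usage_at_discount) * HOURS


print(f"10 instances: ${monthly_unused_commitment(10):.2f} unused")
print(f" 6 instances: ${monthly_unused_commitment(6):.2f} unused")
```

With 6 of the original 10 instances running, about $196 of the monthly commitment has no usage to apply to.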

The example above shows that:

- Pricing models define potential savings

- Coverage determines realized savings

- Usage variability introduces risk

What Drives EC2 Costs?

Amazon EC2 costs are not just determined by what you run, but by how your infrastructure behaves over time.

Even when teams understand Amazon EC2 pricing models, costs often behave in ways that feel unpredictable. Two environments using the same instance types and pricing strategies can end up with very different bills.

Instance Selection Inefficiencies

One of the most common sources of waste comes from how instances are sized. In practice, teams tend to overprovision: they choose larger instances “just to be safe,” leaving headroom for peak traffic that rarely occurs.

But EC2 pricing doesn’t adjust based on utilization. If an instance is running at 20–30% capacity, you’re still paying for 100% of it. Over time, this gap between provisioned capacity and actual usage becomes a persistent cost inefficiency.
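One way to see the cost of overprovisioning is the effective price of the capacity you actually use. The sketch below divides the hourly rate by average utilization. The $0.096/hour rate is an assumed placeholder.

```python
def effective_rate(hourly_rate: float, avg_utilization: float) -> float:
    """Price per hour of *used* capacity: idle headroom is still paid for."""
    if not 0 < avg_utilization <= 1:
        raise ValueError("avg_utilization must be in (0, 1]")
    return hourly_rate / avg_utilization


RATE = 0.096  # assumed On-Demand $/hour
for util in (1.0, 0.5, 0.25):
    print(f"{util:.0%} utilized -> ${effective_rate(RATE, util):.3f} per used hour")
```

At 25% utilization, each hour of useful capacity effectively costs four times the list rate.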

Region-Based Pricing Differences

EC2 pricing varies across regions, sometimes significantly. While this is well known, what’s often overlooked is how architectural decisions lock you into a region through data residency requirements, latency constraints, and service dependencies.

Once workloads are deployed, moving regions is rarely trivial. As a result, teams often continue operating in suboptimal pricing environments simply because migration is too complex.

Workload Variability

In most real-world systems, traffic fluctuates with time of day, seasonality, product growth, and unexpected spikes. This variability creates a constant mismatch between what you provision and what you actually use. More importantly, it creates a mismatch between:

- What you committed to (in Savings Plans or RIs)

- What your system ends up consuming

This is one of the main reasons cost optimization is difficult: usage itself is not stable.

Overprovisioning as a Safety Mechanism

Overprovisioning is often intentional. Teams design systems to handle peak load, which means infrastructure is sized for worst-case scenarios and, most of the time, runs below capacity.

This tradeoff makes sense from a reliability perspective, but it introduces structural inefficiency in cost. You’re effectively paying for performance you only occasionally need.

Underutilized Commitments

Commitment-based pricing models (Savings Plans and Reserved Instances) are designed to reduce costs, but only when they are fully utilized. In practice, underutilization happens when:

- Usage drops below committed levels

- Workloads shift across services or instance types

- Systems are scaled down or re-architected

When this happens, you still pay the full commitment, but there is no longer enough usage for the discount to apply to. This is one of the least visible forms of waste, because it doesn’t show up as an obvious unused resource, but as missed value.

The Compounding Effect

What makes EC2 costs difficult to manage is not any single factor. It’s how these factors combine. A typical environment might simultaneously have:

- Slightly oversized instances

- Moderate traffic variability

- Partial commitment coverage

- A few underutilized resources

Individually, each issue seems small. Together, they create a compounding effect that drives costs higher over time.

The Most Misunderstood Concept in EC2 Pricing Is Coverage

Most explanations of EC2 pricing focus on choosing between On-Demand, Savings Plans, and Reserved Instances. But those choices only define how discounts are applied. They don’t determine how much you actually save. That depends on coverage.

What Is EC2 Coverage?

Amazon EC2 coverage is the percentage of your total compute usage that is billed under discounted pricing (Savings Plans or Reserved Instances) rather than On-Demand rates.

It is typically measured as:

Coverage (%) = (Usage billed under commitments ÷ Total compute usage) × 100

For example, if 70% of your compute usage is covered by Savings Plans or RIs, the remaining 30% is billed at On-Demand rates.
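The formula translates directly into code. This sketch assumes covered and total usage are measured in the same unit, for example instance-hours or On-Demand-equivalent dollars. The function name is illustrative.

```python
def coverage_pct(covered_usage: float, total_usage: float) -> float:
    """Coverage (%) = (usage billed under commitments / total compute usage) x 100."""
    if total_usage <= 0:
        return 0.0  # no usage, nothing to cover
    return 100 * covered_usage / total_usage


covered = coverage_pct(700, 1000)  # the 70% example from the text
print(covered, 100 - covered)      # → 70.0 30.0
```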

How Coverage Works

AWS does not bind commitments to individual instances, and Savings Plans in particular are never tied to specific instances. Instead, discounts are applied dynamically each hour to eligible usage, up to your committed level.

This means:

- Your commitments create a discount pool

- AWS continuously applies that pool to matching usage

- Any usage beyond that pool is billed as On-Demand

So at any given time, your infrastructure is effectively split into two layers:

- Covered usage is discounted

- Uncovered usage is billed at full On-Demand rates
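The discount pool can be simulated hour by hour. This simplified sketch makes two assumptions:

- a single flat discount rate
- a commitment expressed in $/hour of discounted spend, similar to how Savings Plans are sized

Real Savings Plans rates differ by instance family, region, and term.

```python
def apply_commitment(hourly_usage: list[float], commitment: float, discount: float):
    """Split usage into covered and uncovered layers, hour by hour.

    hourly_usage: eligible usage per hour, valued at On-Demand prices ($).
    commitment:   committed spend per hour at discounted prices ($/hour).
    Returns (covered, uncovered, unused_commitment, total_billed) in dollars,
    where covered and uncovered are valued at On-Demand prices.
    """
    capacity = commitment / (1 - discount)  # On-Demand value the pool absorbs per hour
    covered = uncovered = unused = 0.0
    for usage in hourly_usage:
        hour_covered = min(usage, capacity)
        covered += hour_covered
        uncovered += usage - hour_covered  # billed at On-Demand rates
        unused += commitment - hour_covered * (1 - discount)
    total_billed = commitment * len(hourly_usage) + uncovered  # commitment is always paid
    return covered, uncovered, unused, total_billed


# Hypothetical: $0.70/hour committed at 30% off; usage above, at, and below the pool.
print(apply_commitment([1.5, 1.0, 0.5], commitment=0.70, discount=0.30))
```

In the first hour, $0.50 of usage spills over to On-Demand. In the third hour, $0.35 of the commitment goes unused but is still billed.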

Why Coverage Determines Your Actual Cost

Pricing models define how much you could save. Coverage determines how much you actually save.

Two teams can both use Savings Plans, but:

- Team A covers 80–90% of their usage: most compute is discounted

- Team B covers 40–50%: a large portion still runs on On-Demand

Even with the same pricing model, their total costs will differ significantly.

Coverage is not something you set once. It fluctuates as your infrastructure changes: when traffic rises or falls, when instances are added, removed, or resized, when new workloads are deployed, and when services move across regions or architectures.

Because commitments are fixed (e.g., $/hour), but usage is variable, the relationship between the two is constantly changing.

The Two Failure Modes of Coverage

Understanding coverage also means understanding how it fails.

1. Undercoverage (Too Little Commitment)

When coverage is low, a large portion of usage runs on On-Demand and potential savings are not captured. This is the most visible inefficiency: high bills despite using “discounted” pricing models.

2. Overcoverage (Too Much Commitment)

When commitments exceed actual usage, discounts are applied to all available usage. The remaining committed capacity goes unused.

This unused portion still represents spend, meaning you’ve effectively prepaid for usage that didn’t occur.

Why Coverage Is Difficult to Optimize

Optimizing coverage requires aligning two things that behave very differently:

- Commitments: fixed for 1- or 3-year terms

- Usage: variable and constantly changing

Even small shifts in usage can create:

- Gaps (leading to On-Demand exposure)

- Excess (leading to underutilized commitments)

This makes coverage a moving target rather than a fixed optimization problem. A useful way to think about EC2 pricing is:

- Pricing models define discount rates

- Coverage determines how much usage receives those discounts

- Usage variability introduces risk in maintaining alignment

Why Savings Plans and RIs Don’t Always Deliver Expected Savings

Savings Plans and Reserved Instances underperform when committed usage and actual usage drift apart over time.

Savings Plans and Reserved Instances are designed to reduce EC2 costs by offering discounted pricing in exchange for commitment. On the surface, you commit to a certain level of usage, receive lower rates, and reduce overall spend.

But in real-world environments, the outcome is rarely that clean.

The effectiveness of these models depends on a single assumption: that future usage will closely match what was committed. This assumption holds in theory, but begins to break down as soon as infrastructure starts to evolve.

In most production systems traffic changes, services are added or removed, workloads are re-architected, and scaling patterns shift over time. As these changes accumulate, the relationship between committed usage and actual consumption begins to drift.

When usage falls below the committed level, the discounts continue to apply, but only to the usage that still exists. The remaining portion of the commitment has nothing to attach to. From a billing perspective, that unused portion simply stops generating value. You are still paying for the commitment, but no longer benefiting from it fully.

The opposite scenario creates a different kind of inefficiency. When usage grows beyond the committed level, the discounted pricing is applied only up to the commitment threshold. Everything beyond that point is billed at On-Demand rates. The result is a split system, where part of the infrastructure is optimized and the rest remains exposed to full pricing.

What makes this more complex is the mismatch in time horizons. Commitments are fixed over one- or three-year periods, while infrastructure decisions are made continuously. Even small changes, like resizing instances or shifting workloads, can gradually pull commitments out of alignment with actual usage. This drift is rarely sudden, which makes it harder to detect, but over time it has a meaningful impact on cost efficiency.

There is also a difference in how various workloads behave. Compute workloads tend to be relatively stable, which makes them more suitable for longer commitments. In contrast, databases and other specialized services evolve more quickly, making long-term commitments harder to align with actual usage over time.

The Real Tradeoff: Cost vs Flexibility vs Risk

Amazon EC2 pricing models look like a menu of options. But in practice, every decision around pricing is a tradeoff between cost, flexibility, and risk. You can optimize for one or even two of these, but not all three at the same time.

Cost Comes from Commitment

Outside of Spot, the lowest EC2 prices are tied to commitment. Savings Plans and Reserved Instances offer significant discounts because they require you to commit to future usage. The more predictable your usage, the more aggressively you can commit, and the lower your effective cost becomes.

But that lower cost is exchanged for reduced flexibility and increased exposure to misalignment.

Flexibility Comes at a Premium

On-Demand pricing sits at the opposite end of the spectrum. It allows you to scale up or down at any time, change instance types freely, and adapt your infrastructure without constraints.

This flexibility is valuable, especially in fast-changing environments. But it comes at a consistently higher cost, because you are not making any long-term commitments.

In practice, flexibility acts as a buffer against uncertainty, but you pay for that buffer on every unit of usage.

Risk Is Introduced by Misalignment

Risk enters the system when commitments and actual usage diverge. If you commit too little, you leave a large portion of your infrastructure exposed to On-Demand pricing. This results in higher costs, but the inefficiency is visible and relatively easy to understand.

If you commit too much, the problem becomes less obvious. You are still paying for the commitment, but not all of it is being utilized. The unused portion appears as savings that were expected but never realized.

This is a different kind of risk: not overspending directly, but failing to convert commitments into actual savings.

Why You Can’t Maximize All Three

The relationship between cost, flexibility, and risk forms a natural constraint. Reducing cost requires increasing commitment. Increasing commitment reduces flexibility. Reduced flexibility increases the likelihood that usage and commitments will drift apart, which introduces risk.

Trying to eliminate risk entirely pushes you back toward On-Demand usage, which increases cost. Trying to minimize cost aggressively pushes you toward higher commitments, which increases risk. There is no configuration where all three are optimized simultaneously.

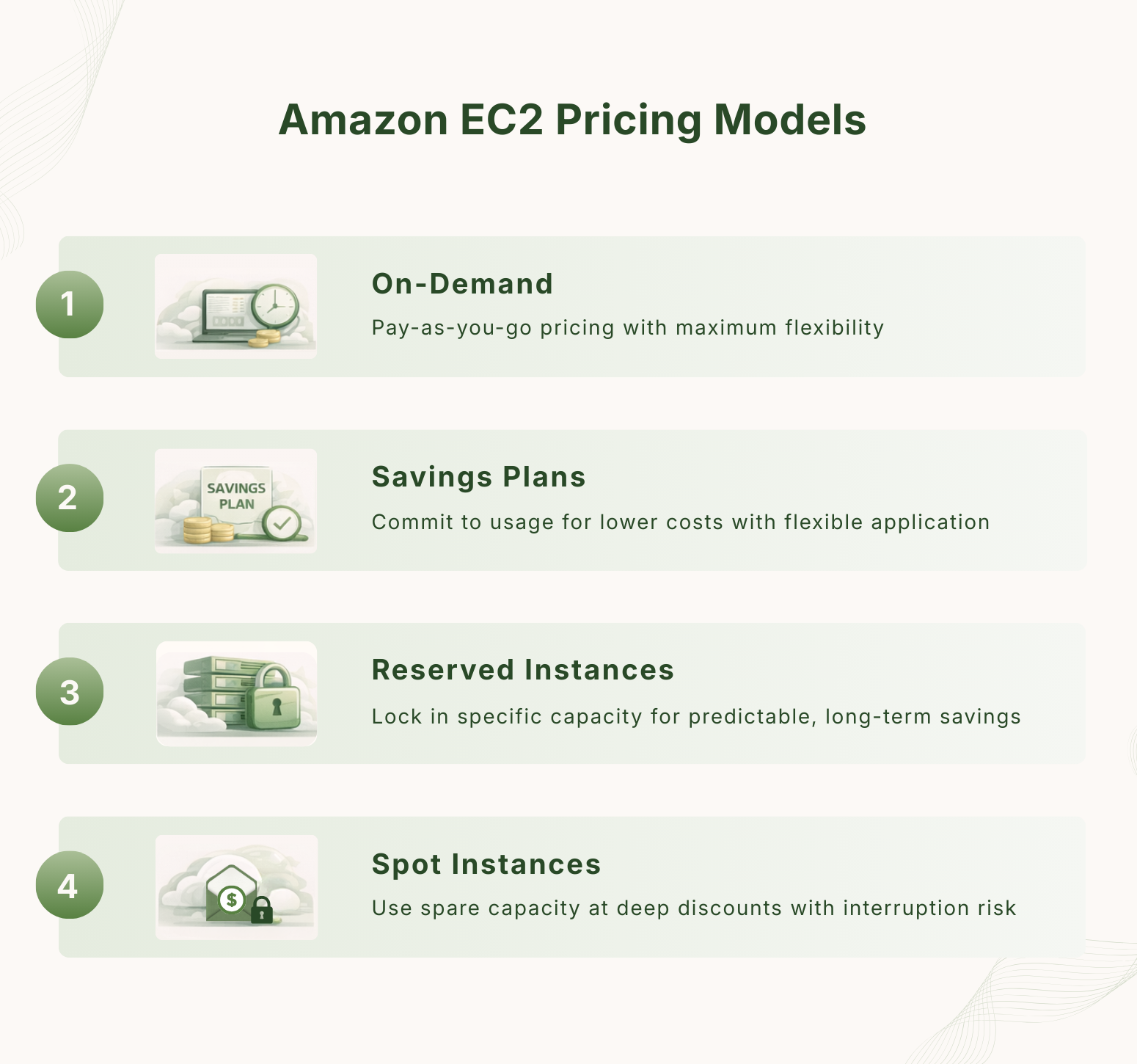

EC2 Cost Optimization Framework Used by FinOps Teams

Once teams understand EC2 pricing models, coverage, and the tradeoff between cost, flexibility, and risk, the challenge shifts from choosing the right option to maintaining alignment over time.

In most AWS environments, inefficiency comes from small gaps between usage and pricing that compound over time, especially when coverage and utilization drift out of sync.

1. Start with Coverage, Not Resource Reduction

Most teams begin optimization by rightsizing instances or shutting down unused resources. While useful, this usually delivers incremental savings. The larger opportunity comes from improving coverage.

In many environments, even after adopting Savings Plans, 30–50% of EC2 usage still runs on On-Demand pricing. Since On-Demand can be 30–60% more expensive than committed rates, this uncovered portion becomes a major cost driver.

Increasing coverage from 50% to 75–80% often reduces total compute cost more than aggressive rightsizing, simply because more of the existing workload benefits from discounted pricing.

2. Balance Coverage with Utilization

Coverage alone does not guarantee savings. What matters is whether committed spend is actually used.

For example:

- A system with 80% coverage but only 70% utilization is still inefficient

- A system with 75% coverage and 90% utilization is typically more optimized

This is why FinOps teams track both:

- Coverage (%): how much usage is discounted

- Utilization (%): how much of the commitment is actually used

In well-optimized environments, coverage typically falls between ~70–85% and utilization between ~85–95%.
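Tracking both metrics together can be as simple as the sketch below. The thresholds are the approximate lower bounds quoted above. Treat them as rules of thumb rather than AWS-defined targets. The function name is hypothetical.

```python
MIN_COVERAGE = 70.0     # % of eligible usage billed under commitments
MIN_UTILIZATION = 85.0  # % of purchased commitment actually consumed


def commitment_health(covered: float, total_usage: float,
                      commitment_used: float, commitment_purchased: float):
    """Return (coverage %, utilization %, list of warnings)."""
    coverage = 100 * covered / total_usage
    utilization = 100 * commitment_used / commitment_purchased
    warnings = []
    if coverage < MIN_COVERAGE:
        warnings.append("undercovered: too much usage on On-Demand")
    if utilization < MIN_UTILIZATION:
        warnings.append("underutilized: paying for unused commitment")
    return coverage, utilization, warnings


# The two systems from the example above.
print(commitment_health(80, 100, 70, 100))  # 80% coverage, 70% utilization
print(commitment_health(75, 100, 90, 100))  # 75% coverage, 90% utilization
```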

3. Align Commitments with Workload Stability

Not all workloads behave the same way, and treating them uniformly leads to misalignment. Stable workloads, such as long-running compute services, can support higher commitment levels with lower risk. In contrast, workloads that scale frequently or evolve quickly introduce more uncertainty.

For example:

- A service consistently running at $10/hour baseline usage can safely support a $7–$9/hour commitment

- A workload fluctuating between $3/hour and $12/hour carries higher risk if overcommitted

This is why compute workloads often support longer commitments, while more dynamic services require flexibility.
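One way to size a commitment for a variable workload is to anchor it to a low percentile of historical hourly usage rather than the average. The sketch below is a hypothetical heuristic, not an AWS algorithm. It commits 90% of the 10th-percentile hour, using the same $/hour units as the example above.

```python
import statistics


def suggest_commitment(hourly_usage: list[float], safety_factor: float = 0.9) -> float:
    """Hypothetical heuristic: commit to a fraction of the 10th-percentile hour.

    Anchoring to a low percentile means usage exceeds the commitment in roughly
    90% of hours or more; the safety factor adds margin for future drops.
    """
    p10 = statistics.quantiles(hourly_usage, n=10)[0]  # first decile cut point
    return round(p10 * safety_factor, 2)


stable = [10.0] * 720                # steady ~$10/hour baseline for a month
spiky = [3.0, 12.0, 6.0, 9.0] * 180  # swings between $3 and $12/hour

print(suggest_commitment(stable))  # → 9.0
print(suggest_commitment(spiky))   # → 2.7
```

For the stable workload, the suggestion lands at $9/hour, inside the $7–$9/hour range from the example. For the fluctuating one, it stays near the $3/hour floor, and the peaks are left to On-Demand or Spot.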

4. Treat Pricing as a Portfolio

Mature environments do not rely on a single pricing model. Instead, they distribute usage across multiple layers.

A typical structure looks like:

- 60–80% covered by Savings Plans or Reserved Instances

- 10–30% on On-Demand for flexibility

- 0–20% on Spot for cost-sensitive workloads

This portfolio approach allows teams to capture baseline savings while maintaining enough flexibility to absorb changes without overcommitting.
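A portfolio's effect on overall price can be estimated as a weighted average of each layer's discount. The discounts below are hypothetical placeholders. Real Savings Plans and Spot discounts vary by instance type, region, term, and, for Spot, available capacity. The sketch also assumes commitments are fully utilized.

```python
DISCOUNTS = {"commitments": 0.30, "on_demand": 0.0, "spot": 0.70}  # assumed, vs On-Demand


def blended_discount(mix: dict[str, float]) -> float:
    """Weighted-average discount for a usage mix whose shares sum to 1."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("usage shares must sum to 1")
    return sum(share * DISCOUNTS[layer] for layer, share in mix.items())


mix = {"commitments": 0.70, "on_demand": 0.20, "spot": 0.10}
print(f"{blended_discount(mix):.0%} below an all-On-Demand bill")
```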

5. Optimize Continuously, Not Periodically

One of the biggest gaps between theory and practice is how often optimization happens. Infrastructure changes daily, but optimization is often done monthly or quarterly. During that time, coverage and utilization drift.

Native recommendation systems can lag by several days, while usage can shift by 10–30% in short periods. Even a 5–10% misalignment between committed and actual usage can lead to meaningful inefficiency at scale.

6. Optimize for Alignment, Not Just Cost

The goal is to maintain alignment between usage, commitments, and pricing models.

When alignment is strong:

- Coverage is high

- Utilization is high

- Savings are realized consistently

When alignment breaks:

- On-Demand exposure increases, or

- Commitments become underutilized

Both lead to higher effective costs over time.

Common Mistakes That Increase Amazon EC2 Costs

Most EC2 cost inefficiencies come from small decisions that seem reasonable in isolation but compound over time.

1. Treating On-Demand as the Default

Many teams start with On-Demand pricing for flexibility, which makes sense early on.

The problem is that it often remains the default even after workloads stabilize. Over time, large portions of infrastructure that could be covered by discounted pricing continue running at full rates.

In mature environments, it’s not uncommon to see 30–50% of usage still on On-Demand, even when baseline workloads are predictable. This creates a persistent cost premium that often goes unnoticed.

2. Overcommitting to Capture Maximum Discounts

On the opposite end, some teams aggressively adopt Savings Plans or Reserved Instances to maximize discounts. This works only if usage remains stable.

When infrastructure changes, which it almost always does, commitments can exceed actual usage. The unused portion doesn’t show up as idle infrastructure, but as commitments that are no longer fully utilized. This is one of the hardest inefficiencies to detect because it looks like optimization on paper.

3. Ignoring Coverage as a Metric

Many teams track total cost, but not how that cost is distributed across pricing models.

Without visibility into coverage:

- It’s unclear how much usage is discounted

- On-Demand exposure remains hidden

- Optimization efforts lack direction

As a result, teams may believe they are optimized simply because they use Savings Plans, without realizing that a significant portion of usage is still uncovered.

4. Focusing Only on Discounts, Not Utilization

Discount percentages are often the most visible part of EC2 pricing. But high discounts don’t guarantee savings.

A commitment offering a 50–60% discount only delivers value if it is fully utilized. If utilization drops to 70–75%, a portion of that discount is effectively lost.

This is why focusing only on discount rates can be misleading. What matters is how much of that discounted capacity is actually used.
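The loss can be quantified. Suppose you commit C dollars, but only a fraction u of the commitment is consumed. You pay C for usage that would have cost u × C / (1 - d) at On-Demand rates, so the realized discount is 1 - (1 - d) / u. The sketch below applies that formula, assuming a single flat discount rate d.

```python
def effective_discount(discount: float, utilization: float) -> float:
    """Realized discount vs On-Demand when only part of a commitment is consumed.

    Paying a commitment C at utilization u buys u * C of discounted usage, which
    would have cost u * C / (1 - discount) at On-Demand rates.
    """
    return 1 - (1 - discount) / utilization


for d, u in [(0.50, 1.00), (0.50, 0.75), (0.60, 0.70)]:
    print(f"{d:.0%} discount at {u:.0%} utilization -> {effective_discount(d, u):.1%} realized")
```

A 50% discount at 75% utilization realizes only about 33%. Below a utilization of 1 - d (50% for a 50% discount), the commitment costs more than the same usage would have on On-Demand.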

5. Treating Optimization as a Periodic Task

Cost optimization is often handled during monthly reviews or quarterly audits. By the time these reviews happen, infrastructure has already changed:

- New workloads have been deployed

- Existing ones have scaled or shifted

- Usage patterns have evolved

This delay allows misalignment between usage and commitments to accumulate over time.

6. Relying Solely on Static Recommendations

Native tools and basic dashboards provide useful guidance, but they are often based on historical snapshots. In fast-moving environments, recommendations can lag behind actual usage by several days.

During that time, decisions based on outdated data can reinforce inefficiencies instead of correcting them.

Amazon EC2 Pricing Calculator: What It Is and How to Use It Correctly

The AWS Pricing Calculator is a web-based tool provided by Amazon Web Services to estimate the cost of running cloud infrastructure, including Amazon EC2.

It allows you to model a specific setup and calculate what that configuration would cost based on AWS pricing. You define:

- Instance type (e.g., m5.large, c5.xlarge)

- Region (e.g., us-east-1)

- Operating system (Linux, Windows, etc.)

- Usage pattern (hours per month)

- Additional components like EBS storage and data transfer

Based on these inputs, the calculator generates an estimated monthly or annual cost.

How It Is Typically Used

The AWS Pricing Calculator is most useful during planning and decision-making stages. Teams commonly use it for:

- Comparing instance types before deployment

- Estimating the cost of a new service

- Forecasting infrastructure spend for budgeting

- Evaluating different pricing models (On-Demand vs Savings Plans vs Reserved Instances)

For example, you might model:

- 5 × t3.medium instances for a staging environment

- 10 × m5.large instances for production

- Attached storage and expected data transfer

And get a rough monthly estimate to guide decisions.
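The calculator's arithmetic for a setup like this is straightforward, and reproducing it shows why the result is a static estimate. The rates below are assumptions:

- approximate us-east-1 Linux On-Demand prices for t3.medium and m5.large
- a gp3-style storage rate
- a first-tier internet egress rate

Always confirm current prices in the AWS Pricing Calculator itself. The storage and egress volumes are also hypothetical.

```python
HOURS_PER_MONTH = 730  # the monthly hour count commonly used for estimates

# Assumed rates for illustration; verify against current AWS pricing.
INSTANCE_RATES = {"t3.medium": 0.0416, "m5.large": 0.096}  # $/hour, On-Demand
EBS_PER_GB_MONTH = 0.08  # $/GB-month
EGRESS_PER_GB = 0.09     # $/GB to the internet


def static_estimate(fleet: dict[str, int], ebs_gb: float, egress_gb: float) -> float:
    """Monthly cost of a fixed configuration running 24/7 at On-Demand rates."""
    compute = sum(count * INSTANCE_RATES[itype] * HOURS_PER_MONTH
                  for itype, count in fleet.items())
    return compute + ebs_gb * EBS_PER_GB_MONTH + egress_gb * EGRESS_PER_GB


# 5 x t3.medium (staging) + 10 x m5.large (production), hypothetical storage and egress.
estimate = static_estimate({"t3.medium": 5, "m5.large": 10}, ebs_gb=1500, egress_gb=500)
print(f"${estimate:,.2f} per month")
```

Every input is a constant, so the number is right for this exact configuration. It says nothing about coverage, utilization, or how usage will change.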

What It Represents (and What It Doesn’t)

The key thing to understand is that the calculator represents a static configuration, not a live system.

It assumes the infrastructure stays the same, usage is consistent and the selected pricing model applies exactly as defined. This makes it accurate for estimating a known setup, but limited when applied to real-world environments where usage changes over time.

The calculator itself is not misleading, but it is often misinterpreted. Teams sometimes treat its output as a guaranteed monthly cost or a fully optimized pricing scenario. In reality, it is neither.

It does not account for:

- Changes in usage over time

- Partial coverage across workloads

- Underutilization of commitments

So while the numbers are correct for the inputs given, they do not reflect how costs behave once infrastructure is running and evolving.

How Modern Platforms Approach EC2 Optimization

As EC2 environments grow in scale and complexity, manual optimization becomes increasingly difficult to maintain. This has led to the emergence of tools and platforms designed to manage cloud costs more systematically.

These platforms don’t replace AWS pricing models, they operate on top of them. Their role is to continuously align usage, commitments, and pricing decisions in environments where conditions change frequently.

Modern cloud cost optimization platforms focus on three core functions:

1. Continuous Usage Analysis

Instead of relying on periodic reviews, these systems continuously analyze usage patterns across accounts, services, and regions.

They track instance-level usage, historical trends and variability in demand. This allows them to identify how stable or volatile different parts of the infrastructure are.
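One common way to put a number on "stable or volatile" is the coefficient of variation: the standard deviation of usage divided by its mean. The sketch below applies it to each workload. The workload names, the sample data, and the 0.25 cut-off are hypothetical. Real platforms may use different measures entirely.

```python
import statistics


def coefficient_of_variation(samples):
    """Population standard deviation divided by mean; 0.0 means perfectly flat usage."""
    mean = statistics.fmean(samples)
    # A zero mean makes the ratio undefined; treat it as maximally volatile.
    return statistics.pstdev(samples) / mean if mean else float("inf")


def classify(usage_by_workload, threshold=0.25):
    # threshold is an ASSUMED cut-off between "stable" and "volatile"
    return {
        name: "stable" if coefficient_of_variation(samples) <= threshold else "volatile"
        for name, samples in usage_by_workload.items()
    }


# Hypothetical hourly instance counts for two workloads
usage = {
    "api-prod": [10, 10, 11, 10, 9, 10, 10, 11],
    "batch-etl": [0, 0, 20, 25, 0, 0, 18, 0],
}
print(classify(usage))
```

A steady service like `api-prod` is a natural candidate for commitments, while a bursty job like `batch-etl` is better suited to On-Demand or Spot capacity.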

2. Dynamic Commitment Optimization

Rather than treating Savings Plans or Reserved Instances as one-time decisions, these platforms model them as adjustable strategies.

They evaluate how much usage should be committed, which type of commitment fits best and how changes in usage affect existing commitments. The goal is to keep coverage and utilization aligned over time, rather than optimizing once and revisiting later.
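A simplified version of "how much usage should be committed" can be framed as a search over commitment levels against historical hourly usage. The sketch below makes several assumptions. It uses a single commitment counted in whole instances, a flat 30% discount, and the premise that future usage will resemble the sample history. Real Savings Plans and Reserved Instance billing apply more rules than this.

```python
def hourly_cost(usage, commit, on_demand_rate, discount):
    # Committed capacity is paid every hour whether it is used or not;
    # usage above the commitment falls back to On-Demand rates.
    committed = commit * on_demand_rate * (1 - discount)
    overflow = max(0, usage - commit) * on_demand_rate
    return committed + overflow


def best_commitment(hourly_usage, on_demand_rate, discount):
    """Try every whole-instance commitment from 0 to peak usage; return (level, total_cost)."""
    costs = {
        c: sum(hourly_cost(u, c, on_demand_rate, discount) for u in hourly_usage)
        for c in range(0, max(hourly_usage) + 1)
    }
    level = min(costs, key=costs.get)
    return level, costs[level]


history = [8, 8, 9, 10, 12, 15, 12, 9]  # hypothetical hourly instance counts
level, cost = best_commitment(history, on_demand_rate=0.096, discount=0.30)  # assumed rate and discount
print(level, round(cost, 4))
```

The result follows a simple marginal rule. One more committed unit pays off only if usage exceeds it in more than (1 − discount) of the hours. That is why the best level sits below peak usage.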

3. Real-Time Feedback Loops

One of the main differences from native tooling is frequency. Instead of acting on delayed or static recommendations, these systems operate on shorter feedback cycles, so decisions reflect more recent usage patterns.

This helps reduce the gap between what the system is doing and what the pricing strategy assumes.

Traditional optimization approaches are typically manual, periodic (monthly or quarterly), and based on historical snapshots. Modern platforms shift this toward continuous cost analysis and automated or assisted decision-making.

Emerging Model Around Flexible Commitments

Traditional EC2 pricing models are built on a simple tradeoff: the more you commit, the more you save. But as infrastructure becomes more dynamic, maintaining alignment between committed usage and actual usage becomes increasingly difficult.

This has led to the emergence of flexible or assured commitment approaches, which aim to preserve discounted pricing while reducing the downside of misalignment. Instead of assuming perfect usage, these models are designed to handle variability more effectively over time.

In traditional setups, unused commitments result in lost value, and excess usage falls back to On-Demand pricing. Flexible approaches attempt to reduce this gap, either by adapting how commitments are applied or by offsetting underutilization in some form. Usage.ai implements this through mechanisms such as automated commitment optimization and cashback-backed models, where a portion of unused commitments can be returned as real value rather than fully lost.
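The contrast can be sketched hour by hour. In the traditional case, the unused part of a commitment is simply lost and excess usage is billed On-Demand. In the flexible case, a fraction of the unused value is returned. The `offset_rate` parameter is a hypothetical stand-in for any such mechanism. It does not describe how Usage.ai or any other vendor actually calculates returns, and the rates and usage figures are assumptions.

```python
def effective_cost(hourly_usage, commit, on_demand_rate, discount, offset_rate=0.0):
    """Total cost of a per-hour commitment measured in instances.

    offset_rate is a HYPOTHETICAL share of unused commitment value that is returned;
    0.0 models a traditional commitment where unused value is simply lost.
    """
    committed_rate = on_demand_rate * (1 - discount)
    total = 0.0
    for usage in hourly_usage:
        unused = max(0, commit - usage)
        overflow = max(0, usage - commit)
        total += commit * committed_rate                 # the commitment is always paid
        total += overflow * on_demand_rate               # excess falls back to On-Demand
        total -= unused * committed_rate * offset_rate   # hypothetical return on unused value
    return total


usage = [10, 10, 6, 4, 10, 12]  # hypothetical hourly instance counts
traditional = effective_cost(usage, commit=10, on_demand_rate=0.1, discount=0.3)
flexible = effective_cost(usage, commit=10, on_demand_rate=0.1, discount=0.3, offset_rate=0.5)
print(round(traditional, 4), round(flexible, 4))
```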

As cloud environments continue to evolve, this reflects a broader shift from optimizing for static usage assumptions to building pricing strategies that remain effective even as usage changes.

Frequently Asked Questions

1. What is Amazon EC2 pricing per hour?

Amazon EC2 pricing per hour varies by instance type, region, and operating system. For example, a general-purpose m5.large instance running Linux costs about $0.096 per hour On-Demand in US East (N. Virginia), and some other regions charge more. Discounted pricing models can reduce this significantly.
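Rates are quoted per hour, but most Linux usage is billed per second with a 60-second minimum, so a short-lived instance costs only a fraction of the hourly rate. The sketch below contrasts this with hourly billing. It assumes hourly billing rounds each partial hour up to a full hour, and it uses an assumed rate of $0.096/hour. Substitute the current price for your region and operating system.

```python
import math


def per_second_cost(runtime_seconds, hourly_rate, minimum_seconds=60):
    # Per-second billing: charge the actual seconds, but never less than the minimum.
    billed = max(runtime_seconds, minimum_seconds)
    return billed * hourly_rate / 3600


def per_hour_cost(runtime_seconds, hourly_rate):
    # ASSUMED hourly model: any partial hour is rounded up to a full hour.
    return math.ceil(runtime_seconds / 3600) * hourly_rate


RATE = 0.096  # assumed On-Demand USD/hour
for seconds in (30, 600, 5400):
    print(seconds, round(per_second_cost(seconds, RATE), 5), round(per_hour_cost(seconds, RATE), 5))
```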

2. What affects EC2 pricing the most?

The main factors affecting EC2 pricing are instance type, region, operating system, and pricing model. However, in practice, the biggest cost drivers are coverage and utilization, which determine how much of your usage is billed at discounted rates versus On-Demand pricing.

3. What is the cheapest EC2 pricing model?

Spot Instances are typically the cheapest EC2 pricing model, offering discounts of up to 90% compared to On-Demand rates. However, AWS can interrupt them with a two-minute warning when it needs the capacity back. This makes them suitable only for fault-tolerant or non-critical workloads.

4. Are Savings Plans worth it?

Savings Plans are worth it when your usage is stable and predictable. They can reduce compute costs by up to 66% (Compute Savings Plans) or up to 72% (EC2 Instance Savings Plans). However, their effectiveness depends on how closely your committed usage matches actual usage over time, and misalignment reduces realized savings.
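A quick way to judge whether a commitment pays off is to compare it with the On-Demand cost of what you actually use. Suppose a commitment carries a discount d and you use a fraction u of it. You pay the full commitment, whereas without it you would have paid On-Demand for only the used part, so savings turn negative below u = 1 − d. The sketch below computes both figures. The 30% discount and the $10/hour commitment are assumptions, not quoted Savings Plan rates.

```python
def realized_savings(commit_per_hour, discount, utilization):
    """Savings vs. On-Demand for the used portion of a commitment, in USD/hour.

    commit_per_hour: what the commitment costs per hour (already discounted)
    discount: fractional discount vs. On-Demand, e.g. 0.30 (assumption)
    utilization: fraction of the commitment actually used, 0.0 to 1.0
    """
    on_demand_equivalent = commit_per_hour / (1 - discount)  # full-price value of the commitment
    on_demand_cost_of_used = utilization * on_demand_equivalent
    return on_demand_cost_of_used - commit_per_hour


def break_even_utilization(discount):
    # Savings are zero when utilization * C / (1 - d) == C, i.e. utilization == 1 - d.
    return 1 - discount


for u in (1.0, 0.8, 0.5):
    print(u, round(realized_savings(10.0, 0.30, u), 2))
print("break-even utilization:", break_even_utilization(0.30))
```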

5. What is EC2 coverage?

EC2 coverage is the percentage of your total compute usage that is billed under discounted pricing models like Savings Plans or Reserved Instances. Higher coverage generally leads to lower costs, as more of your usage avoids On-Demand pricing.
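Coverage reduces to a simple ratio. The sketch below computes it from On-Demand-equivalent spend, split by how each portion was billed. The dollar figures are hypothetical. AWS Cost Explorer reports coverage for Savings Plans and Reserved Instances using its own definitions, so treat this as an approximation of the idea rather than the exact AWS metric.

```python
def coverage(covered_spend, on_demand_spend):
    """Share of eligible usage billed under Savings Plans or Reserved Instances.

    Both inputs are On-Demand-equivalent amounts for the same period.
    """
    total = covered_spend + on_demand_spend
    return covered_spend / total if total else 0.0


# Hypothetical month: $6,000 of usage covered by commitments, $4,000 billed On-Demand
print(f"{coverage(6000, 4000):.0%}")
```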

6. Why is my EC2 bill so high?

EC2 bills are often high due to a combination of factors such as low coverage, overprovisioned instances, data transfer costs, and underutilized commitments. Even when using discounted pricing, a large portion of usage may still be billed at On-Demand rates.

7. Can Reserved Instances be canceled?

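Reserved Instances generally cannot be canceled once purchased. However, Standard Reserved Instances can be sold on the AWS Reserved Instance Marketplace (subject to eligibility requirements), and Convertible Reserved Instances can be exchanged for other Convertible Reserved Instances. Some attributes of both types can also be modified.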

8. What happens if I don’t use my commitment?

If you don’t fully use your Savings Plan or Reserved Instance commitment, the unused portion does not carry over; it is simply lost value. You still pay for the full commitment, but only the utilized portion generates savings.
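That lost value can be measured directly. Utilization is the committed amount actually consumed, divided by the total commitment. Because unused commitment does not carry over, the calculation is done hour by hour: surplus usage in one hour cannot fill a shortfall in another. The sketch below assumes a dollar-per-hour commitment, as Savings Plans are expressed, with usage priced at the commitment's discounted rates. The figures are hypothetical.

```python
def commitment_utilization(hourly_commit, hourly_eligible_usage):
    """Return (utilization, unused_dollars) for a per-hour dollar commitment.

    hourly_eligible_usage: usage priced at the commitment's discounted rates, per hour.
    Each hour is capped at the commitment, so unused value never carries over.
    """
    used = sum(min(usage, hourly_commit) for usage in hourly_eligible_usage)
    committed = hourly_commit * len(hourly_eligible_usage)
    return used / committed, committed - used


# Hypothetical: a $5/hour commitment over six hours of fluctuating usage
util, unused = commitment_utilization(5.0, [5.0, 6.0, 3.0, 2.5, 5.0, 4.5])
print(f"utilization {util:.1%}, unused ${unused:.2f}")
```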

9. Is EC2 cheaper than on-premises infrastructure?

EC2 can be cheaper than on-premises infrastructure when usage is optimized and scaled efficiently. However, costs can exceed on-premises setups if resources are overprovisioned, poorly utilized, or heavily reliant on On-Demand pricing.

10. How can I reduce EC2 costs?

You can reduce EC2 costs by increasing coverage with Savings Plans or Reserved Instances, improving utilization of committed resources, rightsizing instances, and using Spot Instances where appropriate. Continuous monitoring and adjustment are key to maintaining efficiency.
